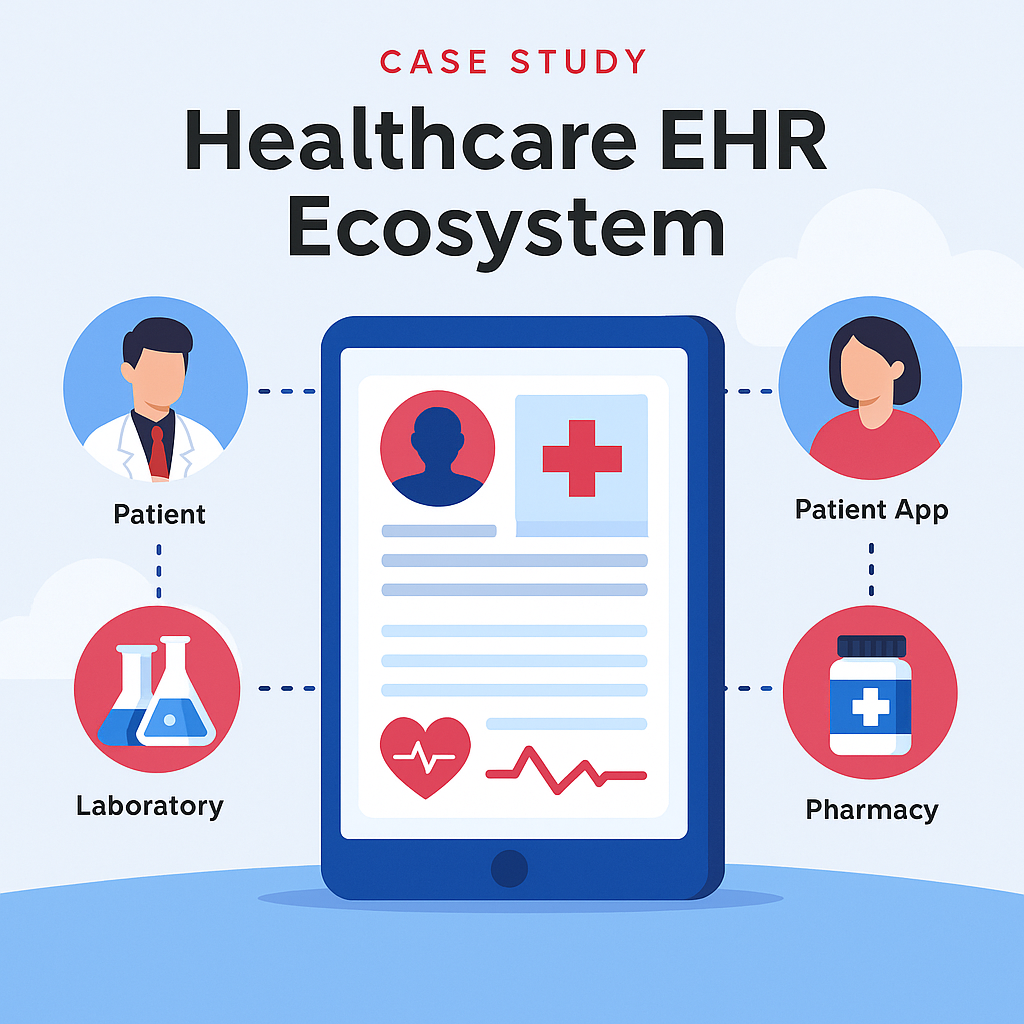

Case Study — Healthcare EHR Ecosystem

Industry: Healthcare & Medical Technology

Services: AI Consulting, Solution Architecture, Data Integration

Engagement: Architecture → Development → Deployment

A healthcare enterprise dedicated to building a comprehensive electronic health record (EHR) ecosystem that securely connects doctors, patients, labs, and pharmacies for seamless healthcare management and research access.

The project focused on developing a centralized EHR platform designed to unify medical data across multiple entities—enabling real-time access, better diagnostics, and improved patient outcomes.

The system integrates AI analytics, interoperability standards, and a secure data exchange framework to streamline medical operations.

Duration: 8–10 months

Technologies: Python, FastAPI, PostgreSQL, Azure Cloud, Power BI, HL7/FHIR APIs, LangChain, OpenAI API

Doctor Portal: Access patient records, prescriptions, and diagnostic history

Patient App: View medical records, appointments, and lab results

Lab Integration: Automated report uploads and result synchronization

Pharmacy Module: Prescription verification and fulfillment tracking

Analytics Dashboard: Real-time insights for care outcomes and research

Data Security: Role-based access, encryption, and audit logging

As the AI Consultant and Solution Architect, I led the end-to-end architecture of the ecosystem, integrating multiple healthcare modules into a single interoperable platform.

I implemented data standardization via HL7/FHIR, designed AI-driven analytics dashboards for patient outcomes, and optimized interoperability for scalability and compliance.

The platform was engineered for high security, modular expansion, and long-term data retention, supporting both clinical operations and academic research.
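
To make the HL7/FHIR standardization step concrete, here is a minimal sketch of mapping an internal patient row to a FHIR R4 `Patient` resource. The input field names (`patient_id`, `first_name`, `last_name`, `sex`, `dob`) are hypothetical, since the platform's actual schema is not described here; only the output keys follow the FHIR `Patient` definition.

```python
import json
from datetime import date

# Hypothetical internal record layout; the real EHR schema is not shown in this case study.
def to_fhir_patient(row: dict) -> dict:
    """Map an internal patient row to a minimal FHIR R4 Patient resource."""
    gender = (row.get("sex") or "").lower()
    if gender not in {"male", "female", "other"}:
        gender = "unknown"  # FHIR administrative-gender code for missing or unmapped values
    return {
        "resourceType": "Patient",
        "id": str(row["patient_id"]),
        "name": [{"family": row["last_name"], "given": [row["first_name"]]}],
        "gender": gender,
        "birthDate": row["dob"].isoformat(),  # FHIR dates use YYYY-MM-DD
    }

example_row = {
    "patient_id": 1001,
    "first_name": "Amina",
    "last_name": "Khan",
    "sex": "Female",
    "dob": date(1988, 4, 12),
}
print(json.dumps(to_fhir_patient(example_row), indent=2))
```

Normalizing every source system into one resource shape like this is what lets the doctor portal, patient app, labs, and pharmacies read the same record without per-integration parsing.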

Industry: E-Commerce & Retail Technology

Services: AI Consulting, Solution Architecture, Product Strategy

Engagement: Concept → Development → Market Launch

A halal-focused e-commerce startup committed to promoting ethical and Sharia-compliant products through AI-driven product verification, recommendation, and consumer trust systems.

The initiative aimed to develop an AI-powered halal commerce platform that verifies product authenticity, categorizes halal-compliant items, and personalizes the user shopping experience.

The system combined AI classification models, data validation pipelines, and intelligent recommendation engines to ensure accuracy, transparency, and scalability across multiple product categories.

Duration: 6–9 months

Technologies: Python, FastAPI, OpenAI API, LangChain, PostgreSQL, Power BI, AWS Cloud, Streamlit / React

Halal Verification Engine: Uses AI to validate product data against certification standards

Recommendation System: Provides personalized suggestions based on user interests and verified compliance

Smart Search: AI-driven search and product categorization using NLP

Vendor Dashboard: Allows merchants to manage, verify, and update halal certifications

Consumer Interface: Intuitive UI for browsing, purchasing, and reviewing halal-certified products

As the AI Consultant and Solution Architect, I designed the platform’s intelligent backend to automate halal certification validation and product categorization.

I implemented AI classification pipelines to process product metadata, integrated API-based verification with halal authorities, and developed a personalized recommendation engine powered by user behavior analytics.

The result was a trusted, AI-first marketplace that blends compliance with modern user experience.
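
The certification check can be illustrated with a small rule-based sketch. It assumes a certificate record carries an issuer and an expiry date; the `Certificate` fields and the `TRUSTED_ISSUERS` entry are placeholders, not the platform's real data model or a real authority.

```python
from dataclasses import dataclass
from datetime import date

# Placeholder issuer name for illustration only; not a real certification body.
TRUSTED_ISSUERS = {"EXAMPLE-HALAL-AUTHORITY"}

@dataclass
class Certificate:
    product_id: str
    issuer: str
    expires_on: date

def verify_certificate(cert: Certificate, today: date) -> list[str]:
    """Return a list of problems; an empty list means the certificate passes."""
    problems = []
    if cert.issuer not in TRUSTED_ISSUERS:
        problems.append("issuer not recognized")
    if cert.expires_on < today:
        problems.append("certificate expired")
    return problems

cert = Certificate("SKU-42", "EXAMPLE-HALAL-AUTHORITY", date(2030, 1, 1))
print(verify_certificate(cert, today=date(2025, 6, 1)))
```

Returning a list of problems rather than a single boolean lets a vendor dashboard show merchants exactly what needs fixing.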

Industry: Public Sector & Nonprofit Technology

Services: AI Consulting, Product Management, Solution Architecture

Engagement: Ideation → Design → Implementation

A nonprofit-focused organization seeking to streamline the creation of AI governance policies and ensure responsible AI adoption across donor-funded projects and social-impact initiatives.

The goal was to build an AI Policy Builder platform that empowers nonprofits to craft, customize, and adopt ethical AI policies with ease.

The system guides users through a structured framework of templates, recommendations, and risk assessments—helping organizations establish compliance and transparency.

Duration: 4–6 months

Technologies: Python, FastAPI, PostgreSQL, Streamlit, LangChain, OpenAI API, Azure Cloud, Power BI

Policy Builder Wizard: Step-by-step guidance to create AI policies aligned with global standards

Template Library: Predefined and editable templates for governance, data usage, and ethics

AI Assistant: Suggests best practices based on organization type and AI maturity

Collaboration Tools: Enables multi-user editing and approval workflows

Dashboard: Tracks completion status, version control, and compliance metrics

As the AI Consultant and Product Architect, I led the platform’s full lifecycle—from conceptual framework to technical design and deployment.

I designed the modular policy engine, enabling customization for different NGO types, and integrated an LLM-driven recommendation system to provide context-aware policy suggestions.

The solution combined structured templates with dynamic AI guidance, ensuring nonprofits could easily create compliant and transparent AI policies.
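
A structured template with fill-in fields can be sketched with Python's standard `string.Template`. The section name, wording, and field names below are invented for illustration and are not taken from the actual template library.

```python
from string import Template

# Hypothetical template text; the real library's wording is not shown here.
TEMPLATES = {
    "data_usage": Template(
        "$org_name will only process personal data for $purpose "
        "and will review this policy every $review_months months."
    ),
}

def render_section(section: str, **fields: str) -> str:
    """Fill a policy template; raises KeyError if a required field is missing."""
    return TEMPLATES[section].substitute(**fields)

print(render_section(
    "data_usage",
    org_name="Example Relief Fund",
    purpose="donor reporting",
    review_months="12",
))
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: a missing field fails loudly instead of producing a policy with an unfilled placeholder.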

Industry: Agriculture & AgriTech

Services: AI Consulting, Computer Vision, Automation Design

Engagement: Research → Development → Field Testing

An agri-tech initiative aiming to enhance crop productivity and reduce manual dependency through AI-driven monitoring, automation, and smart decision-making systems for farmers and agri-businesses.

The project involved developing an AI-powered agriculture automation platform that utilizes computer vision, IoT sensors, and predictive analytics to monitor crop health, detect diseases, and optimize irrigation and fertilizer usage.

The system provided real-time insights, helping farmers make data-backed decisions and improve yield efficiency.

Duration: 6–8 months

Technologies: Python, OpenCV, TensorFlow, Scikit-learn, IoT Sensors, Drone Imagery, Node-RED, AWS Cloud, Power BI

Crop Disease Detection: Identifies plant diseases using image recognition and pattern analysis

Soil & Weather Monitoring: Integrates IoT sensors to analyze soil moisture and weather data

Irrigation Automation: Smart control systems adjust watering schedules based on predictive models

Yield Forecasting: Uses AI to predict crop yield and optimize resource allocation

Farmer Dashboard: Displays real-time insights, alerts, and productivity metrics

As the AI Consultant and Solution Architect, I led the creation of an integrated AI ecosystem combining IoT devices, image analytics, and predictive algorithms.

I designed workflows that linked drone imagery with sensor data, enabling precision farming through actionable intelligence.

The architecture was cloud-based, scalable, and capable of adapting to different crop environments and climates.
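
As a rough illustration of how sensor data can drive irrigation, the sketch below turns a soil-moisture reading and a rain forecast into a watering duration. The thresholds and the linear rule are assumptions made for the example; the deployed system used predictive models that are not described here.

```python
def irrigation_minutes(
    soil_moisture_pct: float,
    rain_forecast_mm: float,
    target_pct: float = 35.0,       # assumed target moisture level
    minutes_per_pct: float = 2.0,   # assumed watering time per point of deficit
    rain_skip_mm: float = 5.0,      # assumed forecast above which watering is skipped
) -> float:
    """Return how long to irrigate, in minutes, using a simple deficit rule."""
    if rain_forecast_mm >= rain_skip_mm:
        return 0.0  # expected rain covers the deficit
    deficit = max(0.0, target_pct - soil_moisture_pct)
    return deficit * minutes_per_pct

print(irrigation_minutes(soil_moisture_pct=25.0, rain_forecast_mm=0.0))
```

In practice the default values would be tuned per crop and climate, which is where the predictive models mentioned above would replace the fixed constants.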

Industry: Healthcare & Medical IoT

Services: AI Consulting, Solution Architecture, Product Development

Engagement: Concept → Prototype → Pilot

A health-tech startup focused on leveraging AI and IoT to monitor and detect respiratory diseases such as asthma and COPD through real-time spirometry data.

The objective was to develop an AI-powered spirometer system that could measure lung performance, detect early signs of respiratory issues, and transmit data to healthcare providers for continuous monitoring.

The solution combined IoT sensor technology, AI analytics, and cloud integration to create a scalable, connected health ecosystem.

Duration: 5–7 months

Technologies: Python, TensorFlow Lite, Scikit-learn, Node-RED, MQTT, AWS IoT, Firebase, Streamlit, Power BI

IoT Data Capture: Real-time airflow and pressure readings via spirometer sensors

AI Analytics: Machine learning algorithms for anomaly detection and health scoring

Dashboard: Visualize breathing trends, lung capacity, and historical reports

Mobile App Integration: Remote access for patients and doctors

Cloud Sync: Secure data transmission for research and multi-device tracking

As the AI Consultant and Solution Architect, I led the architecture and integration of the end-to-end IoT + AI system.

I developed the data pipeline connecting hardware sensors to AI inference models and implemented predictive algorithms for early disease identification.

The system adhered to healthcare compliance standards (HIPAA-ready) and enabled remote diagnostics through real-time data analytics.
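
One simple form of anomaly detection on a stream of readings is a z-score check against a patient's own baseline. The sketch below assumes a plain list of numeric readings and a fixed threshold; it stands in for, and is not, the machine learning models used in the project.

```python
from statistics import mean, stdev

def flag_anomalies(readings: list[float], baseline_size: int = 5, z_threshold: float = 3.0) -> list[int]:
    """Return indexes of readings that deviate strongly from the initial baseline."""
    baseline = readings[:baseline_size]
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return []  # a flat baseline gives no scale to measure deviation against
    return [
        i
        for i, value in enumerate(readings[baseline_size:], start=baseline_size)
        if abs(value - mu) / sigma > z_threshold
    ]

# Hypothetical readings: five baseline values followed by three new ones.
readings = [3.0, 3.1, 2.9, 3.0, 3.1, 3.0, 2.0, 3.05]
print(flag_anomalies(readings))
```

A per-patient baseline matters here because normal lung function varies widely between individuals, so a single global threshold would misfire.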

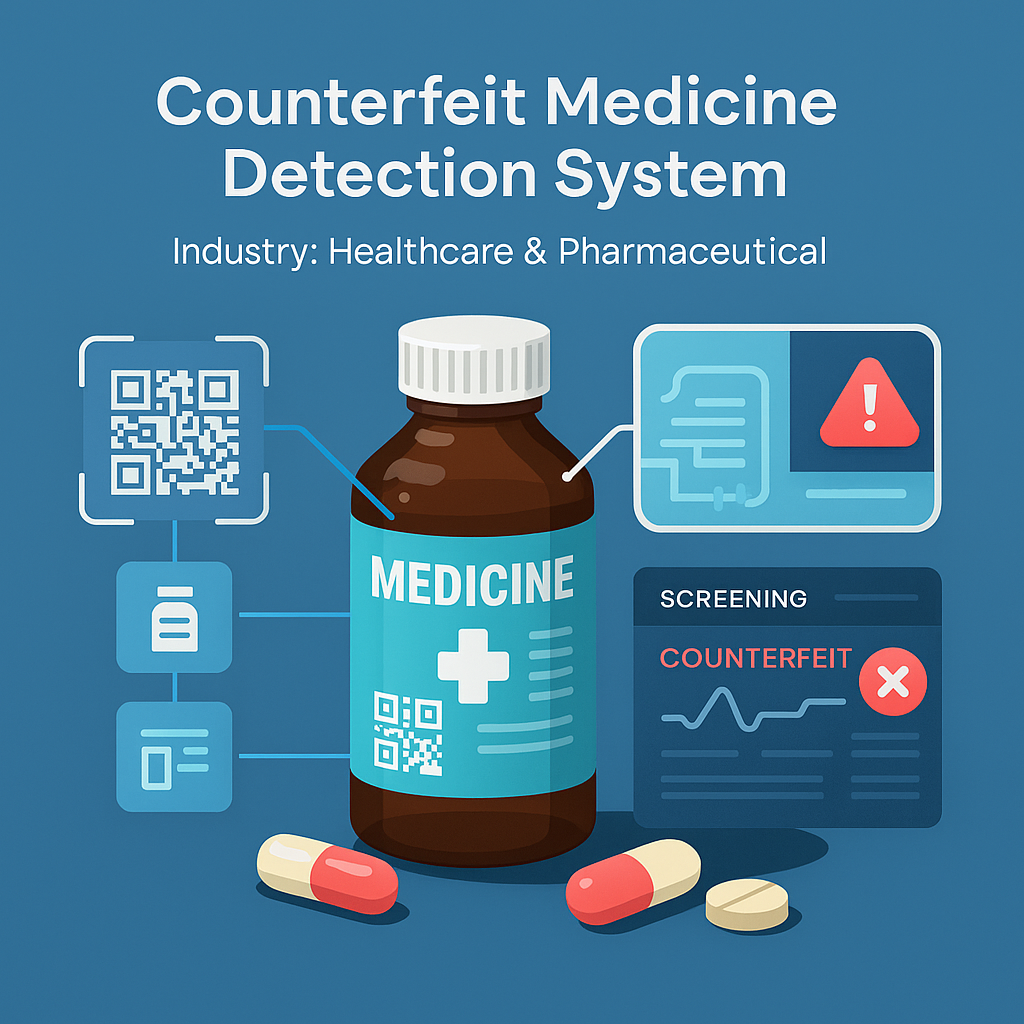

Industry: Healthcare & Pharmaceutical

Services: AI Consulting, Computer Vision, Product Development

Engagement: Research → Prototype → Validation

A healthcare technology initiative focused on improving drug authenticity verification and public safety by leveraging AI-driven detection and supply-chain transparency.

The project aimed to design an AI-based counterfeit medicine detection system capable of identifying fake pharmaceutical products before they reach patients.

The platform analyzed packaging, labeling, QR codes, and chemical patterns using AI and computer vision to ensure authenticity during distribution.

Duration: 6–8 months

Technologies: Python, TensorFlow / PyTorch, OpenCV, YOLOv5, FastAPI, AWS S3, PostgreSQL, Pandas, NumPy

Image Analysis: Detect inconsistencies in labels, texture, and holograms using deep learning

Barcode & QR Validation: Verify serialization and product metadata against trusted databases

Supply Chain Integration: Real-time verification for pharmacies and distributors

Dashboard: Display analytics, detection reports, and risk scores

Mobile Interface: Allow on-the-spot verification via smartphone camera

As the AI Solution Architect and Consultant, I designed and implemented the entire detection pipeline — from image preprocessing to deep learning classification.

Using YOLOv5 for object detection and CNN-based models for feature validation, the system achieved high precision in distinguishing authentic from counterfeit medicines.

The architecture was modular, scalable, and cloud-hosted, ensuring fast inference and easy integration with pharmacy systems.
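
Before any database lookup, a scanned barcode can be screened with a check-digit test. The sketch below assumes the packaging carries a GS1 GTIN (8, 12, 13, or 14 digits); the case study does not state which barcode standard was used, so treat this as one plausible first step rather than the system's actual validator.

```python
def gtin_check_digit(body: str) -> int:
    """Compute the GS1 check digit for the digits that precede it."""
    total = 0
    # Starting from the rightmost body digit, weights alternate 3, 1, 3, 1, ...
    for position, char in enumerate(reversed(body)):
        weight = 3 if position % 2 == 0 else 1
        total += int(char) * weight
    return (10 - total % 10) % 10

def is_valid_gtin(code: str) -> bool:
    """Check length, digits-only content, and the trailing check digit."""
    if not (code.isascii() and code.isdigit()) or len(code) not in (8, 12, 13, 14):
        return False
    return gtin_check_digit(code[:-1]) == int(code[-1])

print(is_valid_gtin("4006381333931"))
```

A passing check digit only shows the code is well formed. Confirming that the serial number was actually issued still requires the lookup against a trusted database described above.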

Gained a strong foundation in programming, algorithms, databases, and software engineering with a focus on applied computing and innovation.

Certified Entrepreneur – Causation Business Model. Learned startup development, innovation, and strategic business planning through real-world entrepreneurial frameworks.

Hands-on professional training in applied AI, data preprocessing, model building, and deployment of machine learning solutions.

Studied strategy, finance, and operations with a focus on the effectuation business model for startups and growth ventures.

Certified in data analysis, visualization, and insights generation using spreadsheets, SQL, and data storytelling techniques.

Completed Effectuation: Lessons from Expert Entrepreneurs — exploring decision frameworks used by top entrepreneurs globally.

Selected as a Fellow & Scholar in Applied Data and AI Innovation, focusing on data equity, AI ethics, and product scalability.

Recognized for leadership in entrepreneurship and innovation—demonstrating expertise in business design, product execution, and AI-driven growth.

Defined and delivered AI solutions, including semantic search and AI chatbots for banking and enterprise automation.

Worked as an AI Solution Architect on the Counterfeit Medicine Detection System, designing AI pipelines to identify fake pharmaceuticals with 95% accuracy. Mentored global fellows in AI innovation, data equity, and applied research projects.

Led end-to-end AI implementation and developed the AI Policy Builder for nonprofits, improving workflow efficiency by 40%.

Directed enterprise AI projects — implemented donor analytics and e-commerce AI boosting engagement and sales.

Built an AI-powered education platform integrating metaverse learning and adaptive content systems.

Developed adaptive AI learning models and translated technical systems into business impact.

Enhanced lead generation by 25% through predictive data analytics and marketing optimization.

Automated data scraping systems, expanding product databases by 200% and reducing update time by 50%.

Founded a digital health startup improving EHR access and patient data management efficiency by 50%.

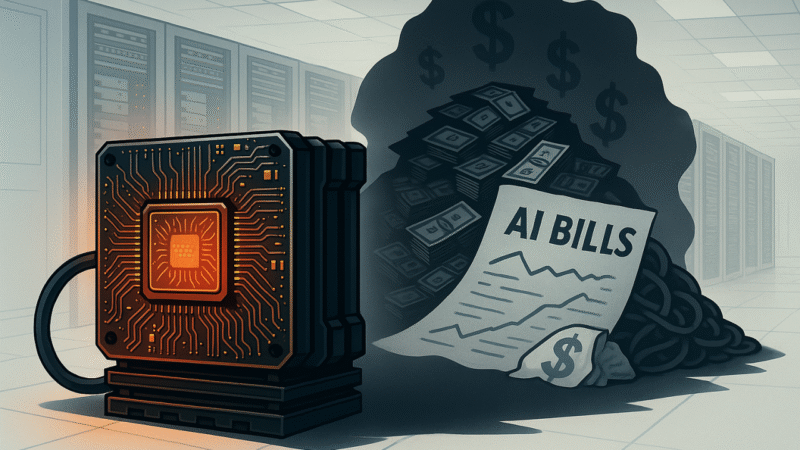

In the high-stakes world of banking and finance, every penny counts, and speed is paramount. Yet, an invisible money pit is quietly draining resources from even the most forward-thinking institutions: the seemingly indispensable GPU. While some banks boast about their internal AI agents, a critical question is emerging: Are they unwittingly bleeding cash on GPU infrastructure when a more cost-effective, equally powerful alternative exists? The answer is a resounding YES, and the solution lies in the rise of GPU-free AI for inference, poised to revolutionize how financial institutions deploy intelligent agents, slashing operational costs without sacrificing performance or compliance.

The Brief Breakdown:

It’s a common misconception that AI equals GPUs. For years, the computational muscle required to train complex AI models, especially large language models (LLMs) and deep learning networks, has undeniably resided in Graphics Processing Units. Their parallel processing capabilities make them ideal for crunching massive datasets and iteratively refining model parameters.

However, the picture changes dramatically when we talk about AI inference. Inference is the process of putting a trained AI model to work – using it to make predictions, analyze data, or power decisions in real-time. Think of it as the difference between building a car (training) and driving it (inference). While building a high-performance car requires specialized tools and heavy machinery, driving it for everyday tasks doesn’t.

This is where the financial sector’s current GPU dependency for AI agents is becoming an unsustainable burden. We’ve seen banks invest heavily in GPU farms for their internal AI agents, often overlooking a critical distinction: most AI agent tasks in banking are inference-heavy, not training-heavy.

The Unseen Costs of GPU Overkill in Banking:

The Real Solution: Unleashing the Power of CPU-Based AI Agents

The paradigm shift is happening now. Advances in CPU architecture and, crucially, massive leaps in AI model optimization are making CPU-only inference not just possible, but preferable for a vast majority of financial AI agent use cases.
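
The article does not name specific optimizations, so as an assumption, here is one widely used example: weight quantization, which stores model weights as 8-bit integers plus a scale factor so that CPUs can use smaller, faster integer arithmetic. This pure-Python sketch shows symmetric int8 quantization on a toy weight list; production toolchains do this per tensor or per channel with optimized kernels.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric quantization: map floats to integers in [-127, 127] plus a scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.0, 1.0]  # toy values for illustration
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, [round(r, 4) for r in restored])
```

Each restored weight differs from the original by at most half a quantization step, which is the accuracy traded for a roughly fourfold reduction in weight storage compared with 32-bit floats.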

Here’s why GPU-free AI agents are the game-changer for banking and finance:

2. Unmatched Ubiquity and Scalability:

3. Pioneering Software Optimization for Inference:

Real-World Impact and Use Cases: Cutting the AI Bill

The shift to GPU-free AI inference translates directly into tangible cost savings across various sectors:

Companies like Ampere Computing are at the forefront, advocating for CPU-centric approaches to AI inference, highlighting the energy efficiency and cost advantages, particularly as models become more specialized and refined for specific tasks rather than requiring a “supercomputer” for every prediction. Intel and VMware are also collaborating to enable scalable and efficient AI operations on CPU-driven infrastructure, even for tasks like LLM inference, by leveraging technologies like Intel’s Advanced Matrix Extensions (AMX).

Imagine AI agents deployed across a bank, performing critical tasks without the GPU overhead:

Leading the charge, institutions like Bank of America with its AI assistant Erica, and Capital One with Eno are processing millions of customer interactions daily. These sophisticated virtual assistants exemplify the massive scale of AI inference workloads in finance, from answering balance inquiries and tracking spending to flagging potential fraud and providing personalized financial insights. Each of these interactions, while seemingly simple, represents a complex AI decision point.

For an AI agent like Erica or Eno, optimizing their underlying models for CPU-based inference means that every single customer query or proactive alert can be processed at a significantly lower operational cost. Multiply that by billions of interactions annually, and the savings from shedding expensive GPU reliance become monumental, directly impacting the bank’s bottom line.

Specifically, let’s look at key areas where GPU-free AI agents deliver:

These are not future aspirations; these are capabilities being deployed today by forward-thinking institutions who have recognized the GPU inference trap. They are embracing the power of optimized, CPU-driven AI.

The Path Forward for Financial Institutions:

Conclusion: Beyond the Hype, Towards Sustainable AI

The banking and financial sector stands at a pivotal moment. The allure of AI’s transformative power is undeniable, but the associated costs, particularly from an overreliance on GPUs for inference, can cripple even the most ambitious initiatives. The real challenge is not just adopting AI, but adopting it intelligently and sustainably.

By shifting focus to GPU-free AI agents for inference, banks can unlock unprecedented operational efficiencies, drastically cut costs, and accelerate their digital transformation. This isn’t just about saving money; it’s about building a future where AI is pervasive, powerful, and economically viable, truly solving real-world challenges in a hyper-competitive, regulated industry. The era of GPU-free AI is here, and for financial institutions, ignoring it is a luxury they simply cannot afford.

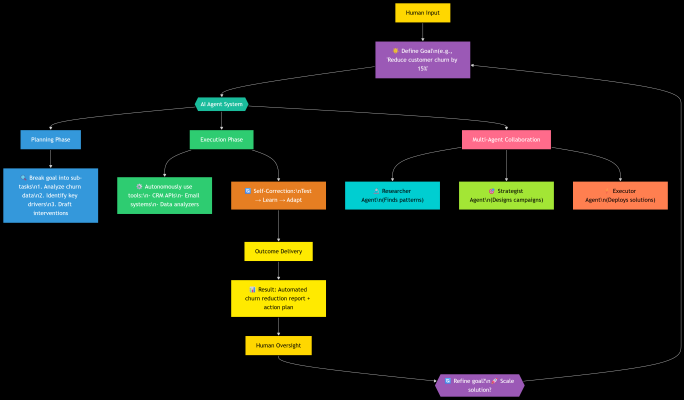

Imagine asking a colleague to “plan your vacation.” You don’t micromanage them—you trust them to research flights, book hotels, and adjust plans if flights get delayed. An AI Agent works the same way.

Unlike tools like ChatGPT (which responds when prompted), an AI Agent:

In short: AI Agents don’t just answer—they act.
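
The difference between answering and acting can be sketched as a small loop that works through a plan, calls a tool for each step, and retries when a step fails, much like the colleague who rebooks after a delayed flight. The tool functions and plan below are toy stand-ins invented for this example; a real agent would generate the plan with a model and call real services.

```python
from typing import Callable

def run_agent(
    goal: str,
    plan: list[str],
    tools: dict[str, Callable[[str], str]],
    max_attempts: int = 2,
) -> list[tuple[str, str]]:
    """Execute each planned step with its tool, retrying a step that fails."""
    history = []
    for step in plan:
        for attempt in range(1, max_attempts + 1):
            try:
                outcome = tools[step](goal)
            except RuntimeError as err:
                history.append((step, f"attempt {attempt} failed: {err}"))
                continue  # try the same step again
            history.append((step, outcome))
            break  # step succeeded, move on to the next one
    return history

# Toy tools: the flight booking fails once to simulate a delay.
calls = {"book_flight": 0}

def book_flight(goal: str) -> str:
    calls["book_flight"] += 1
    if calls["book_flight"] == 1:
        raise RuntimeError("flight delayed")
    return "flight rebooked"

def book_hotel(goal: str) -> str:
    return "hotel booked"

history = run_agent(
    "plan a vacation",
    plan=["book_flight", "book_hotel"],
    tools={"book_flight": book_flight, "book_hotel": book_hotel},
)
for step, outcome in history:
    print(step, "->", outcome)
```

The `history` list doubles as an audit trail, which matters once agents act without a human approving each step.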

How Do They Work?

Think of an AI Agent as a self-driving car for tasks:

Real-World Analogy:

Why Now? The Tipping Point.

Three seismic shifts enabled agents:

Early Examples You’ve Seen:

The Future: Where Are Agents Heading?

We’re entering the Age of Agentic Ecosystems, where:

Specialized agents collaborate:

Impact: Cut product launch cycles from months → days.

“The factory of the future will have only two employees: a person and a dog. The person’s job is to feed the dog. The dog’s job is to stop the person from touching the machines.” — With AI Agents, this isn’t a joke. It’s a strategy.

AI Agents won’t replace humans—they’ll redefine our potential. The most successful leaders won’t fear autonomy; they’ll harness it to solve problems we once thought impossible.

“We spent 50 years teaching machines to think. Now, we teach them to do.”

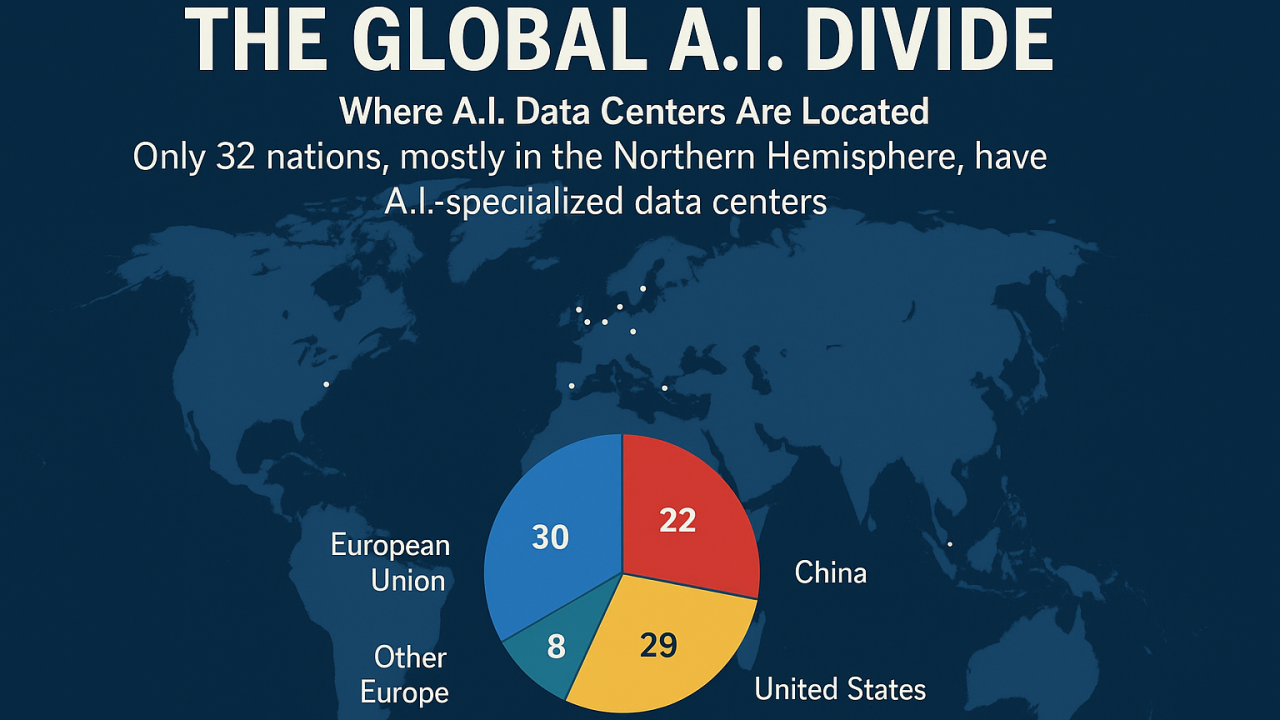

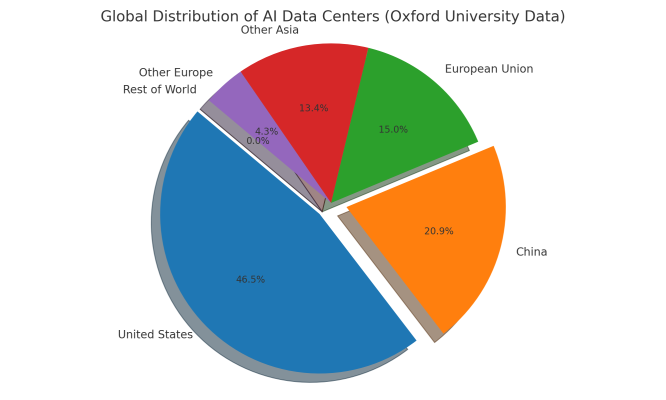

As artificial intelligence transforms everything from healthcare and scientific discovery to national security and digital sovereignty, a clear truth has emerged: the world is dividing into two groups—those with AI compute power, and those without.

Only 32 countries, mostly in the Northern Hemisphere, currently host AI-specialized data centers—critical infrastructure for training large-scale models, supporting local innovation, and enabling scientific discovery. Over 150 countries, spanning much of Africa and South America, are completely excluded from this landscape.

“We are losing,” says Nicolás Wolovick, an Argentinian researcher operating a small-scale AI lab in a converted classroom—an echo of the struggles faced across the Global South.

The result? An AI-powered compute divide, widening disparities in research capability, economic opportunity, and cultural representation.

The U.S. and China dominate global AI infrastructure, controlling over 90% of AI data centers, with companies such as Microsoft, Google, Amazon, Tencent, and Alibaba leading the expansion efforts. These giants also control access to Nvidia GPUs, the gold standard for cutting-edge AI. Countries lacking local computing capabilities must rely on distant cloud services, a costly workaround that introduces latency issues, legal complexities, and data sovereignty risks.

Saudi Arabia is making a bold leap into the AI computing space:

These efforts mark a significant shift: Saudi Arabia is moving from a consumer to a creator of AI infrastructure, emphasizing compute sovereignty and global competitiveness.

Without compute parity, countries face many risks:

Saudi Arabia’s strategy—massive investment, green energy integration, and multi-partner contracts—could serve as a model for other emerging economies aiming for AI parity.

Bridging the compute gap requires bold, coordinated efforts:

AI leadership must be shared—it cannot be siloed. The rise of Saudi Arabia’s compute infrastructure via Humain marks a turning point. For AI to stay a global, inclusive movement, nations must invest in infrastructure, partnerships, and policies to distribute compute power fairly.

Let’s support a future where compute power is a right, not a privilege—and where innovation thrives everywhere. 🌍🚀

I am available for freelance work. Connect with me by phone or email.

Phone: (555) 345 678 90 Email: admin@example.com