In 2025, AI isn’t just hype — it’s here.

It’s screening job applicants. Approving loans. Diagnosing diseases. Shaping criminal justice decisions.

But here’s the truth no one likes to admit:

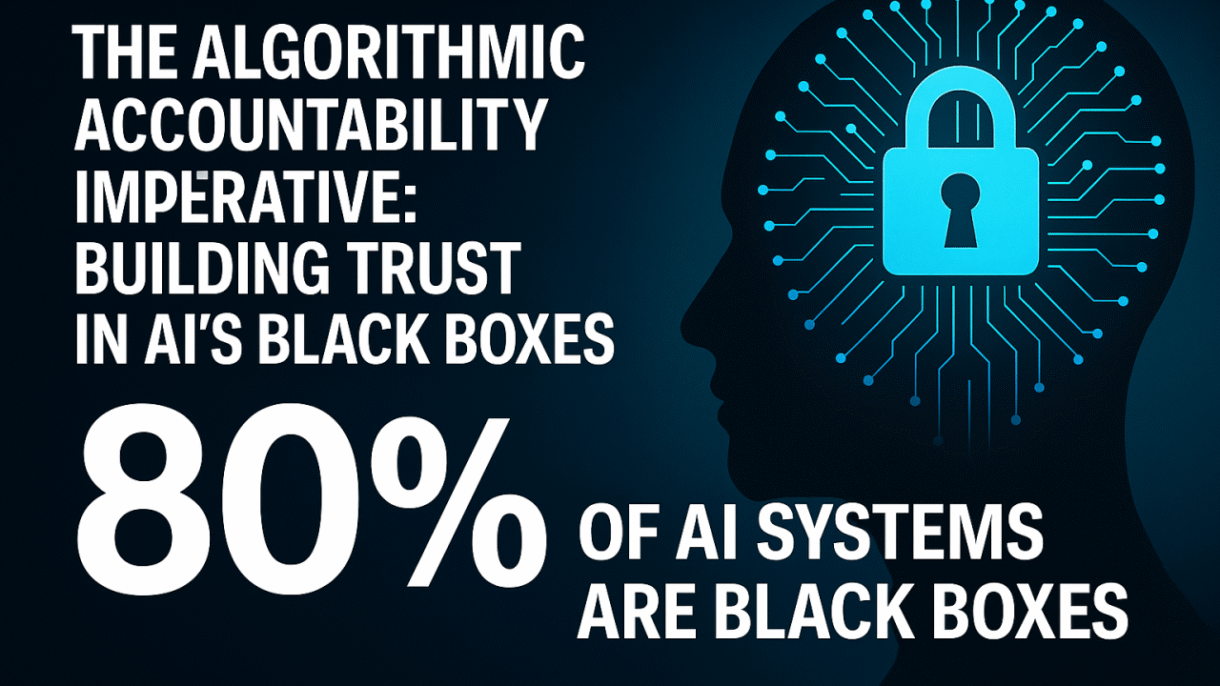

Most of us — even experts — don’t actually know how these AI systems make decisions.

We’re entrusting major life outcomes to “black box” models whose logic is invisible to the people they impact. This isn’t science fiction — it’s a crisis of trust unfolding right now. And it’s costing individuals, businesses, and governments more than we realize.

⚠️ The Real Risk: When Bias Goes Unchecked

Behind every AI system is data. And behind data are people — with their histories, preferences, and biases.

🔸 A hiring AI that favors certain universities

🔸 A medical AI trained mostly on male patients

🔸 A credit scoring model that penalizes applicants from certain zip codes

These aren’t hypothetical. They’re real-world examples of algorithmic bias in action. And when systems lack transparency, we don’t even realize when discrimination is happening — until it’s too late.

🎯 Why it matters:

- Unfair Outcomes: Bias gets coded into decisions that impact lives.

- Zero Accountability: People can’t understand or appeal AI-driven decisions.

- Regulatory Exposure: Companies face growing legal and ethical scrutiny.

- Loss of Public Trust: Without trust, AI adoption slows — innovation stalls.

✅ The Way Forward: Algorithmic Accountability

The good news? This isn’t a hopeless problem.

We don’t need to stop using AI — we need to build it responsibly. That starts with algorithmic accountability: a set of practices that make AI systems explainable, auditable, and fair.

Here’s how:

🧠 1. Explainable AI (XAI) Is Not Optional

For Engineers: Build models that provide transparent reasoning by leveraging tools such as:

- Model-agnostic techniques (e.g., LIME, SHAP)

- Feature importance charts

- Counterfactual examples (“what would need to change for a different result?”)
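To make the counterfactual idea concrete, here is a minimal sketch in plain Python. It assumes a toy linear loan-approval model; the feature names, weights, and applicant values are hypothetical and chosen only for illustration. Because the model is linear, each feature's contribution can be read off directly. For complex models, tools like LIME or SHAP estimate this kind of breakdown instead.

```python
import math

# Hypothetical weights for a toy loan-approval model (illustrative values only).
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "years_employed": 0.15}
BIAS = -2.0


def score(applicant):
    """Linear score (log-odds) of approval."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def approval_probability(applicant):
    """Logistic transform of the score into a probability."""
    return 1.0 / (1.0 + math.exp(-score(applicant)))


def contributions(applicant):
    """Per-feature contribution to the score; exact only because the model is linear."""
    return {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}


def counterfactual_value(applicant, feature, threshold=0.5):
    """Value `feature` would need (all else held fixed) to reach the approval threshold."""
    if WEIGHTS[feature] == 0:
        raise ValueError(f"{feature} has no effect on this model")
    target_score = math.log(threshold / (1.0 - threshold))
    gap = target_score - score(applicant)
    return applicant[feature] + gap / WEIGHTS[feature]


# Hypothetical applicant, used only to exercise the functions above.
applicant = {"income_k": 40, "debt_ratio": 0.5, "years_employed": 2}
print(f"Approval probability: {approval_probability(applicant):.2f}")
print("Contributions:", contributions(applicant))
print(f"Income needed for approval: {counterfactual_value(applicant, 'income_k'):.1f}k")
```

The last line answers the "what would need to change?" question for a single feature. A real counterfactual tool would also check that the suggested change is realistic and actionable for the person affected.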

For Business Leaders: Don’t settle for “black box” vendors. Ask:

- Can we understand and explain the decisions made by this system?

- Can our legal and compliance teams interpret its outputs?

Bottom line: Trustworthy AI is explainable AI.

⚖️ 2. Bias Detection & Mitigation at Every Stage

For Data Scientists: Audit datasets for imbalance. Test models with fairness metrics such as demographic parity and equalized odds. Use diverse, representative training data. Monitor in real time after deployment.
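As a rough sketch of what a first-pass audit might look like, the Python below checks how each group is represented in a dataset and compares selection rates across groups. The group labels and predictions are made-up illustration data, and the function names are hypothetical, not taken from any particular fairness library.

```python
from collections import Counter, defaultdict


def group_shares(groups):
    """Share of the dataset belonging to each group (a basic imbalance check)."""
    counts = Counter(groups)
    return {g: n / len(groups) for g, n in counts.items()}


def selection_rates(groups, predictions):
    """Fraction of positive (1) predictions per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for g, p in zip(groups, predictions):
        totals[g] += 1
        positives[g] += int(p == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rate (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up example: 6 applicants from group A, 4 from group B.
groups = ["A"] * 6 + ["B"] * 4
predictions = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
print("Group shares:", group_shares(groups))
print("Selection rates:", rates)
print(f"Parity difference: {demographic_parity_difference(rates):.2f}")
print(f"Disparate impact ratio: {disparate_impact_ratio(rates):.2f}")
```

A commonly cited rule of thumb flags a disparate impact ratio below 0.8 for closer review. Treat that as a starting point for investigation, not as proof of fairness or unfairness.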

For Executives: Invest in diverse data teams. Create incentives for ethical data practices. Make fairness a KPI, not a “nice-to-have.”

Garbage in = garbage out. Bias starts at the data level.

🤝 3. Keep Humans in the Loop

For Developers: Design AI systems with checkpoints where human review can override or validate decisions. Build intuitive dashboards for transparency.
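One way to picture such a checkpoint is the sketch below. It assumes the model returns an approval probability, and it sends borderline or high-stakes cases to a person instead of deciding automatically. The thresholds, field names, and function names are hypothetical and would need to be set by each team's own policy.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Decision:
    outcome: str             # "approve" or "deny"
    needs_human_review: bool
    reason: str              # recorded so the decision can be audited later


def route_decision(probability, high_stakes=False, approve_at=0.5, review_band=0.15):
    """Turn a model probability into a decision, flagging cases for human review."""
    outcome = "approve" if probability >= approve_at else "deny"
    if high_stakes:
        return Decision(outcome, True, "high-stakes case: policy requires review")
    if abs(probability - approve_at) < review_band:
        return Decision(outcome, True, "model is close to the decision boundary")
    return Decision(outcome, False, "automated: model is outside the review band")


def apply_review(decision, reviewer_outcome, reviewer_note):
    """Record a human reviewer's final call, which overrides the model's outcome."""
    return replace(
        decision,
        outcome=reviewer_outcome,
        needs_human_review=False,
        reason=f"human review: {reviewer_note}",
    )


borderline = route_decision(0.55)
print(borderline)
print(apply_review(borderline, "deny", "income documents could not be verified"))
```

Keeping the `reason` field on every decision is a deliberate choice here: it gives dashboards and auditors something to show when someone asks why a case was or was not escalated.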

For Operations & Compliance Teams: Establish protocols that spell out when a human review is required. Train teams to question the system, not blindly follow it.

AI is powerful — but it should never replace human judgment where ethics are involved.

🏛️ 4. Embrace Emerging Standards & Regulations

For Technical Experts: Collaborate with open-source AI ethics communities. Contribute to evolving standards.

For Policymakers & Leaders: Support regulations like:

- GDPR and other data privacy frameworks

- Algorithmic transparency acts

- AI audit requirements

Regulation isn’t the enemy of innovation — it’s the foundation of responsible scaling.

🛤️ The Path Forward: Collaboration Is Key

Solving this doesn’t fall on any one group. It’s a shared responsibility:

- 🔧 Developers must build with ethics and transparency in mind

- 💼 Business leaders must demand accountability and allocate resources

- 🏛️ Governments must legislate and enforce fairness

- 🌍 The public must stay informed and ask questions

AI is the most powerful tool of our time. But its real value won’t come from complexity — it will come from trust.

💬 Over to You:

Would you trust an AI system to make a decision about your job, health, or finances — today? What do you think is the most important step in building ethical, explainable AI?