Hi, I’m Michael

Web designer and developer working for envato.com in Paris, France.

My Experience

Software Development

Co-Founder

Microsoft Corporation

Web Design.

Founder, XYZ IT Company

Reinventing the way you create websites

Teacher and Developer

SuperKing LTD

Sr. Software Engineer

Education

BSc in Computer Science

University of DVI

New Haven, CT ‧ Private, non-profit

AS - Science & Information

SuperKing College

Los Angeles, CA 90095, United States

Secondary School Education

Kingstar Secondary School

New Haven, CT ‧ Private, non-profit

My Resume

Education Quality

BSc in Computer Science

Islamabad, Pakistan (2014 - 2018)

Gained a strong foundation in programming, algorithms, databases, and software engineering with a focus on applied computing and innovation.

Entrepreneurship Program (LUMS)

Lahore, Pakistan (2017)

Certified Entrepreneur, Causation Business Model. Learned startup development, innovation, and strategic business planning through real-world entrepreneurial frameworks.

AI & Machine Learning — DICE Analytics

Pakistan (2020)

Hands-on professional training in applied AI, data preprocessing, model building, and deployment of machine learning solutions.

Mini MBA — IBA Karachi

Karachi, Pakistan (2021)

Studied strategy, finance, and operations with a focus on the effectuation business model for startups and growth ventures.

Google Certified Data Analyst

Global (2021)

Certified in data analysis, visualization, and insights generation using spreadsheets, SQL, and data storytelling techniques.

University of Virginia, Darden Business School

USA, Online (2023)

Completed "Effectuation: Lessons from Expert Entrepreneurs", a course exploring decision frameworks used by top entrepreneurs globally.

Equitech Future / Applied Data Institute Fellowship

Chicago, USA, Online (2023)

Selected as a Fellow & Scholar in Applied Data and AI Innovation, focusing on data equity, AI ethics, and product scalability.

Global Certified Entrepreneur

Global (2024)

Recognized for leadership in entrepreneurship and innovation, demonstrating expertise in business design, product execution, and AI-driven growth.

Design Skills

- Design Thinking & Innovation Strategy

- AI and Data Consulting

- Entrepreneurship & Venture Building

- Startup Mentorship & Advisory

- Data Visualization & Dashboard Design

- AI Product Interface Design (UX/UI)

- Workflow & Automation Design

- Information Visualization

- Presentation & Communication Design

- Power BI / Tableau / Google Data Studio

- Canva / Figma / Adobe XD

- Notion / Airtable / Zapier

Development Skills

- Python

- SQL

- Machine Learning & Algorithms

- Deep Learning

- Natural Language Processing (NLP)

- Generative AI / LLMs / AI Agents

- Data Wrangling & Cleaning

- Data Modeling & Preprocessing

- API Integration & Automation

- FastAPI / Streamlit / Flask

- RAG (Retrieval-Augmented Generation)

- LangChain / LlamaIndex

- AI Chatbots & Agents Development

- Cloud Deployment (Azure / GCP / AWS)

- Power BI Scripting & DAX

- Google Analytics / Data Tools

- Version Control (Git / GitHub)

- Project Management & Agile Methodology

Job Experience

AI Consultant | AI Solution Architect

SecureMax, Riyadh, KSA (Jun 2024 – Present)

Defined and delivered AI solutions, including semantic search and AI chatbots for banking and enterprise automation.

AI Solution Architect & Fellowship Scholar

Equitech Future / Applied Data Institute, Chicago, USA (2023 – Present)

Worked as an AI Solution Architect on the Counterfeit Medicine Detection System, designing AI pipelines to identify fake pharmaceuticals with 95% accuracy. Mentored global fellows in AI innovation, data equity, and applied research projects.

AI Product Manager | AI Architect & Implementation Specialist

Savvy Virtual Solution, North Carolina, USA (Jan 2024 – Mar 2025)

Led end-to-end AI implementation and developed the AI Policy Builder for nonprofits, improving workflow efficiency by 40%.

AI Architect | AI Product Manager | Data Analyst

GRC, Virginia, USA (Jan 2021 – Feb 2025)

Directed enterprise AI projects, implementing donor analytics and e-commerce AI that boosted engagement and sales.

AI Product Specialist | Tech Consultant

Elitesford, Canada (Mar 2022 – Dec 2023)

Built an AI-powered education platform integrating metaverse learning and adaptive content systems.

Data Analyst | Tech Consultant

SGR, Remote (2021 – 2022)

Developed adaptive AI learning models and translated technical systems into business impact.

Data Scientist

Nash FintechX, Virginia, USA (Mar 2020 – Jun 2022)

Enhanced lead generation by 25% through predictive data analytics and marketing optimization.

Tech Consultant & Data Analyst

Halal Commerce, Toronto, Canada (Mar 2019 – Nov 2021)

Automated data scraping systems, expanding product databases by 200% and reducing update time by 50%.

Founder & Managing Director

Medical Data Company, Islamabad, Pakistan (Jan 2018 – Mar 2021)

Founded a digital health startup improving EHR access and patient data management efficiency by 50%.

Trainer Experience

Halal E-Commerce Platform (Canada)

— Guided founder from concept to launch.

Recruitment Automation System (KSA)

— Helped design and adopt AI-driven workflows.

UNDP Entrepreneurship Program (Pakistan)

— Trained 90+ entrepreneurs in modeling and execution.

AI Policy Builder (USA)

— Mentored compliance and roadmap structure.

Member and Donor IQ, Data Analytics Product Startup (USA)

— Supported strategy and product-market alignment.

Data Visualization Society (USA)

— Mentor for Data Career Mentees

My Portfolio

My Blog

The Billion-Dollar Question: Is Your Bank Overpaying for AI? Why GPU-Free AI Agents Are the Future of Finance

In the high-stakes world of banking and finance, every penny counts, and speed is paramount. Yet an invisible money pit is quietly draining resources from even the most forward-thinking institutions: the seemingly indispensable GPU. While some banks boast about their internal AI agents, a critical question is emerging: Are they unwittingly bleeding cash on GPU infrastructure when a more cost-effective, equally capable alternative exists? For many inference workloads the answer is yes, and the solution lies in the rise of GPU-free AI for inference, which is poised to change how financial institutions deploy intelligent agents by cutting operational costs without sacrificing performance or compliance.

The Brief Breakdown:

It’s a common misconception that AI equals GPUs. For years, the computational muscle required to train complex AI models, especially large language models (LLMs) and deep learning networks, has undeniably resided in Graphics Processing Units. Their parallel processing capabilities make them ideal for crunching massive datasets and iteratively refining model parameters.

However, the picture changes dramatically when we talk about AI inference. Inference is the process of putting a trained AI model to work – using it to make predictions, analyze data, or power decisions in real-time. Think of it as the difference between building a car (training) and driving it (inference). While building a high-performance car requires specialized tools and heavy machinery, driving it for everyday tasks doesn’t.

This is where the financial sector’s current GPU dependency for AI agents is becoming an unsustainable burden. We’ve seen banks invest heavily in GPU farms for their internal AI agents, often overlooking a critical distinction: most AI agent tasks in banking are inference-heavy, not training-heavy.

The Unseen Costs of GPU Overkill in Banking:

- Astronomical Hardware Costs: High-end GPUs for AI are notoriously expensive. A single professional-grade GPU can cost tens of thousands of dollars. Multiply that by the dozens, if not hundreds, of units a bank might deploy for its AI initiatives, and the CapEx quickly becomes eye-watering.

- Power Consumption and Cooling Nightmares: GPUs are power hogs. Running them 24/7 generates immense heat, requiring sophisticated and equally expensive cooling systems. This translates directly into skyrocketing electricity bills and increased carbon footprint, a concern for environmentally conscious institutions.

- Underutilization and Idle Assets: The brutal truth is that many GPUs purchased for AI in banks sit idle for significant periods, or are underutilized for inference tasks that don’t demand their full processing power. This is a wasted investment, akin to buying a Formula 1 car for city driving.

- Scaling Headaches: Scaling GPU infrastructure is complex and capital-intensive. Adding more GPUs means more space, more power, more cooling, and more specialized IT personnel to manage it all.
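The underutilization point above comes down to simple arithmetic: the effective cost of a request is the hourly cost of the hardware divided by the number of requests it actually serves in that hour. The sketch below illustrates this. Every figure in it (hourly costs, throughput, utilization) is a hypothetical placeholder chosen to show the calculation, not a measurement, and the `Deployment` class and `cost_per_million` function are names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """One way of serving an inference workload. All figures are placeholders."""
    name: str
    hourly_cost_usd: float      # hardware amortization + power + cooling, per hour
    peak_throughput_rps: float  # requests per second at full load
    utilization: float          # fraction of peak actually used, 0 < u <= 1


def cost_per_million(d: Deployment) -> float:
    """Effective cost of serving one million requests at the given utilization."""
    served_per_hour = d.peak_throughput_rps * d.utilization * 3600
    return d.hourly_cost_usd / served_per_hour * 1_000_000


# Hypothetical numbers, chosen only to illustrate the arithmetic.
gpu = Deployment("gpu-node", hourly_cost_usd=4.00, peak_throughput_rps=400, utilization=0.10)
cpu = Deployment("cpu-node", hourly_cost_usd=0.50, peak_throughput_rps=50, utilization=0.80)

for d in (gpu, cpu):
    print(f"{d.name}: ${cost_per_million(d):.2f} per million requests")
```

With these placeholder inputs the mostly idle GPU node is the more expensive option per request, but the same function shows the GPU node becoming cheaper once its utilization approaches 100%. The comparison is only as good as the utilization and throughput figures measured for a real workload.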

The Real Solution: Unleashing the Power of CPU-Based AI Agents

The paradigm shift is happening now. Advances in CPU architecture and, crucially, massive leaps in AI model optimization are making CPU-only inference not just possible, but preferable for a vast majority of financial AI agent use cases.

Here’s why GPU-free AI agents are the game-changer for banking and finance:

1. Massive Cost Reduction:

- Hardware Savings: CPUs are significantly cheaper to acquire than GPUs. Banks can leverage existing server infrastructure, dramatically extending the lifespan and utility of their current assets.

- Energy Efficiency: For small models and intermittent or low-volume inference tasks, modern CPUs can draw far less power than a GPU that sits mostly idle. This translates into lower electricity bills and reduced cooling requirements, directly impacting the bottom line.

- Reduced Cloud Spend: For banks relying on cloud-based AI, opting for CPU-optimized inference instances can slash monthly cloud expenses, which are often heavily weighted by GPU utilization fees.

2. Unmatched Ubiquity and Scalability:

- Leverage Existing Infrastructure: Every server in a bank’s data center, every branch office’s local server, and even desktop machines in some cases, are powered by CPUs. This ubiquity means AI agents can be deployed almost anywhere, instantly expanding reach and reducing deployment friction.

- Simplified Scaling: Scaling CPU-based inference is often as simple as provisioning more virtual machines or adding commodity servers, avoiding the specialized logistical challenges of GPU expansion.

3. Pioneering Software Optimization for Inference:

- Quantization: This groundbreaking technique allows AI models to run with lower numerical precision (e.g., 8-bit integers instead of 32-bit floating points) with minimal accuracy loss. The result? Dramatically smaller models that execute faster on CPUs.

- Pruning and Sparsity: AI models can be “thinned” by removing redundant connections or weights, making them more efficient for CPU processing without compromising performance.

- Optimized Libraries and Frameworks: Companies like Intel (with OpenVINO) and AMD (with ZenDNN), along with open-source communities, are pouring resources into developing highly optimized software libraries and frameworks that make CPU inference fast for many AI models. This means developers can write code that leverages CPU power for AI without hand-tuning.

- On-Device/Edge Deployment: For AI agents requiring near-instantaneous responses, like fraud detection at the point of sale or personalized customer service on a mobile app, CPU-based edge AI eliminates network latency and keeps sensitive financial data on-device, enhancing security and privacy.
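To make quantization and pruning concrete, here is a minimal sketch in plain Python. It assumes the simplest variants of each technique (symmetric per-tensor int8 quantization and magnitude-based pruning) applied to a toy list of weights. The function names and the weight values are invented for illustration. Production toolchains use more refined schemes, so treat this as a picture of the idea rather than how any particular library implements it.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the int8 codes."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude weights; `sparsity` is the fraction to drop."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


weights = [0.82, -0.41, 0.05, -1.27, 0.003, 0.66, -0.09, 0.30]  # toy values

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

pruned = prune_by_magnitude(weights, sparsity=0.5)

print("int8 codes:", codes)
print("max round-trip error:", round(max_error, 4))
print("pruned:", pruned)
```

Each weight stored as an 8-bit integer takes a quarter of the space of a 32-bit float, and the round-trip error is bounded by half the scale factor. Whether that error is acceptable for a given model has to be checked against its accuracy on real validation data.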

Real-World Impact and Use Cases: Cutting the AI Bill

The shift to GPU-free AI inference translates directly into tangible cost savings across various sectors.

Companies like Ampere Computing are at the forefront, advocating for CPU-centric approaches to AI inference, highlighting the energy efficiency and cost advantages, particularly as models become more specialized and refined for specific tasks rather than requiring a “supercomputer” for every prediction. Intel and VMware are also collaborating to enable scalable and efficient AI operations on CPU-driven infrastructure, even for tasks like LLM inference, by leveraging technologies like Intel’s Advanced Matrix Extensions (AMX).

Imagine AI agents deployed across a bank, performing critical tasks without the GPU overhead.

Leading the charge, institutions like Bank of America with its AI assistant Erica, and Capital One with Eno are processing millions of customer interactions daily. These sophisticated virtual assistants exemplify the massive scale of AI inference workloads in finance, from answering balance inquiries and tracking spending to flagging potential fraud and providing personalized financial insights. Each of these interactions, while seemingly simple, represents a complex AI decision point.

For an AI agent like Erica or Eno, optimizing their underlying models for CPU-based inference means that every single customer query or proactive alert can be processed at a significantly lower operational cost. Multiply that by billions of interactions annually, and the savings from shedding expensive GPU reliance become monumental, directly impacting the bank’s bottom line.

Specifically, let’s look at key areas where GPU-free AI agents deliver:

- Customer Service Bots (Chatbots/Voicebots): Handling millions of customer queries daily. Each interaction is an inference. CPU-powered bots mean lower operational costs per interaction.

- Fraud Detection: AI agents constantly analyze transaction streams for anomalies. For every single transaction, this is an inference task. Running this on optimized CPUs offers real-time detection without the massive GPU bill.

- Automated Document Processing (KYC/AML): Analyzing vast numbers of identity documents, loan applications, or regulatory filings. OCR and NLP models for these tasks are highly optimizable for CPU inference.

- Credit Scoring & Loan Underwriting: Rapidly assessing creditworthiness based on numerous data points.

- Risk Management & Compliance Monitoring: Continuously scanning market data, regulatory updates, and internal logs for potential risks or non-compliance.

- Personalized Banking Recommendations: Delivering tailored product suggestions to customers based on their financial behavior.

These are not future aspirations; these are capabilities being deployed today by forward-thinking institutions that have recognized the GPU inference trap. They are embracing the power of optimized, CPU-driven AI.

The Path Forward for Financial Institutions:

- Audit Your AI Workloads: Understand which AI tasks are true training workloads (where GPUs might still be essential) versus inference workloads. The vast majority of live AI agent deployments fall into the latter.

- Embrace Model Optimization: Invest in data scientists and MLOps teams skilled in techniques like quantization, pruning, and model compression for CPU deployment.

- Leverage Open-Source and Specialized Libraries: Explore and integrate CPU-optimized AI inference libraries and frameworks.

- Strategic Hardware Procurement: Prioritize powerful general-purpose CPUs and consider vendors that are leading in CPU-based AI acceleration.

- Pilot and Prove: Start with pilot projects for CPU-only AI agents in a controlled environment to demonstrate cost savings and performance gains before a wider rollout.

Conclusion: Beyond the Hype, Towards Sustainable AI

The banking and financial sector stands at a pivotal moment. The allure of AI’s transformative power is undeniable, but the associated costs, particularly from an overreliance on GPUs for inference, can cripple even the most ambitious initiatives. The real challenge is not just adopting AI, but adopting it intelligently and sustainably.

By shifting focus to GPU-free AI agents for inference, banks can unlock unprecedented operational efficiencies, drastically cut costs, and accelerate their digital transformation. This isn’t just about saving money; it’s about building a future where AI is pervasive, powerful, and economically viable, truly solving real-world challenges in a hyper-competitive, regulated industry. The era of GPU-free AI is here, and for financial institutions, ignoring it is a luxury they simply cannot afford.

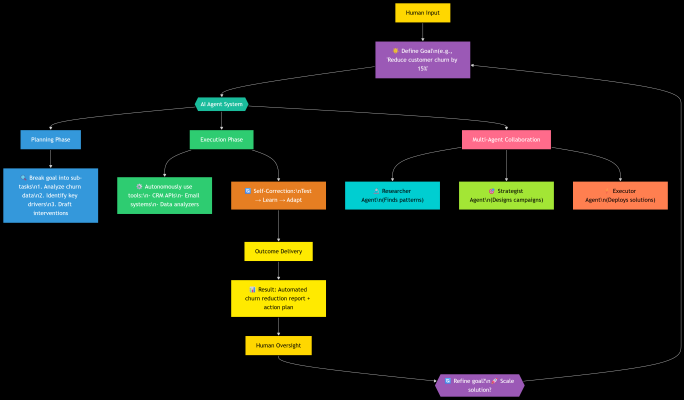

The Agentic Revolution: How AI Is Shifting From Assistant to Actor

What Is an AI Agent?

Imagine asking a colleague to “plan your vacation.” You don’t micromanage them—you trust them to research flights, book hotels, and adjust plans if flights get delayed. An AI Agent works the same way.

Unlike tools like ChatGPT (which responds when prompted), an AI Agent:

- Pursues goals autonomously (“Plan a vacation within $3K”).

- Breaks tasks into steps (find flights → compare hotels → build itinerary).

- Self-corrects (if a hotel is full, it finds alternatives).

- Uses tools (browsers, calculators, APIs).

In short: AI Agents don’t just answer—they act.

How Do They Work?

Think of an AI Agent as a self-driving car for tasks:

- Goal Input: You define the outcome (“Increase Q3 sales by 10%”).

- Planning: The agent creates a step-by-step plan (analyze data → identify trends → draft campaign).

- Execution: It uses tools (Excel, email, CRM) to execute steps.

- Learning: It learns from feedback (“Campaign A failed? Try Campaign B”).
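The goal, plan, execute, and feedback cycle described above can be sketched as a small loop. This is a toy illustration under loud assumptions: the plan is hard-coded rather than generated by a language model, the "tools" are stub functions invented for this example, and "learning" is reduced to trying a fallback when a step fails. It borrows the vacation example from earlier in the post.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: each takes the shared state and returns (success, note).
Tool = Callable[[Dict[str, object]], Tuple[bool, str]]


def find_flights(state):
    state["flight"] = "FL-100"
    return True, "flight found"


def book_hotel(state):
    # The first-choice hotel is full, so the agent must self-correct.
    return False, "hotel full"


def book_alternative_hotel(state):
    state["hotel"] = "Backup Inn"
    return True, "alternative hotel booked"


def build_itinerary(state):
    state["itinerary"] = f"{state['flight']} + {state['hotel']}"
    return True, "itinerary built"


# Planning: the goal is broken into ordered steps, each with fallback tools.
PLAN: List[Tuple[str, List[Tool]]] = [
    ("find flights", [find_flights]),
    ("book hotel", [book_hotel, book_alternative_hotel]),
    ("build itinerary", [build_itinerary]),
]


def run_agent(goal, plan):
    state = {"goal": goal}
    log = []
    for step, tools in plan:
        for tool in tools:          # execution: try each tool in turn
            ok, note = tool(state)
            log.append(f"{step}: {note}")
            if ok:                  # feedback: move on once a tool succeeds
                break
        else:
            log.append(f"{step}: gave up")  # no tool worked, stop the run
            break
    return state, log


state, log = run_agent("Plan a vacation within $3K", PLAN)
for line in log:
    print(line)
```

In a real agent the `PLAN` list would typically be produced and revised by a model at run time, and the tools would call actual APIs. The control flow of attempting a step, observing the result, and adapting is the part this sketch is meant to show.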

Real-World Analogy:

- ChatGPT = A brilliant intern who needs constant direction.

- AI Agent = A seasoned project manager who runs the show.

Why Now? The Tipping Point.

Three seismic shifts enabled agents:

- Smarter AI: Models like GPT-4 can reason step-by-step.

- Cheaper Computing: Cloud costs fell 80% in 5 years.

- Tool Integration: Agents now use software (Slack, SAP, GitHub) like humans.

Early Examples You’ve Seen:

- DevOps Agents: Auto-fix bugs in your code.

- Customer Service Agents: Resolve returns/refunds end-to-end.

- Personal Agents: Plan your week, book meetings, and track expenses.

The Future: Where Are Agents Heading?

We’re entering the Age of Agentic Ecosystems, which is likely to unfold in three phases.

Phase 1: Multi-Agent Teams (2025-2027)

Specialized agents collaborate:

- A “Researcher Agent” analyzes market trends.

- A “Creator Agent” drafts marketing content.

- A “Negotiator Agent” liaises with vendors.

Impact: Cut product launch cycles from months → days.

Phase 2: Human-AI Symbiosis (2028-2030)

- You become a “Conductor”:

- Set high-level goals (“Expand into Southeast Asia by 2030”).

- Agents handle execution (market analysis, regulatory compliance, hiring).

- Ethical AIs: Agents debate trade-offs (“Speed vs. sustainability?”) before acting.

Phase 3: The Self-Improving Ecosystem (2030+)

- Agents build better agents:

- Identify inefficiencies → redesign workflows → deploy upgraded teammates.

- Real-World Impact:

- Healthcare: Agent swarms simulate 100K drug interactions overnight.

- Climate: Agents balance grid demand/renewables across continents.

Why This Changes Everything for You:

- For Professionals:

- Your value shifts from task execution → outcome leadership.

- Upskill in: goal-setting, AI oversight, and ethical guardrails.

- For Businesses:

- Compete on agent orchestration speed (not headcount).

- Win markets by running 24/7 R&D, marketing, and ops cycles.

“The factory of the future will have only two employees: a person and a dog. The person’s job is to feed the dog. The dog’s job is to stop the person from touching the machines.” — With AI Agents, this isn’t a joke. It’s a strategy.

Your First Steps with AI Agents:

- Try a Simple Agent: Start with a small, low-risk task and let an agent handle it end to end.

- Spot Pilot Opportunities: Identify repetitive, rule-based tasks (data cleanup, report generation).

- Join the Conversation: Follow frameworks like Microsoft’s AutoGen or CrewAI.

AI Agents won’t replace humans—they’ll redefine our potential. The most successful leaders won’t fear autonomy; they’ll harness it to solve problems we once thought impossible.

“We spent 50 years teaching machines to think. Now, we teach them to do.”

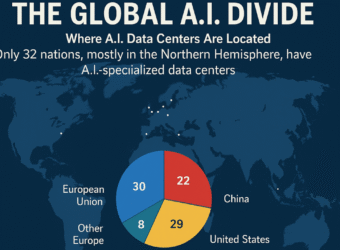

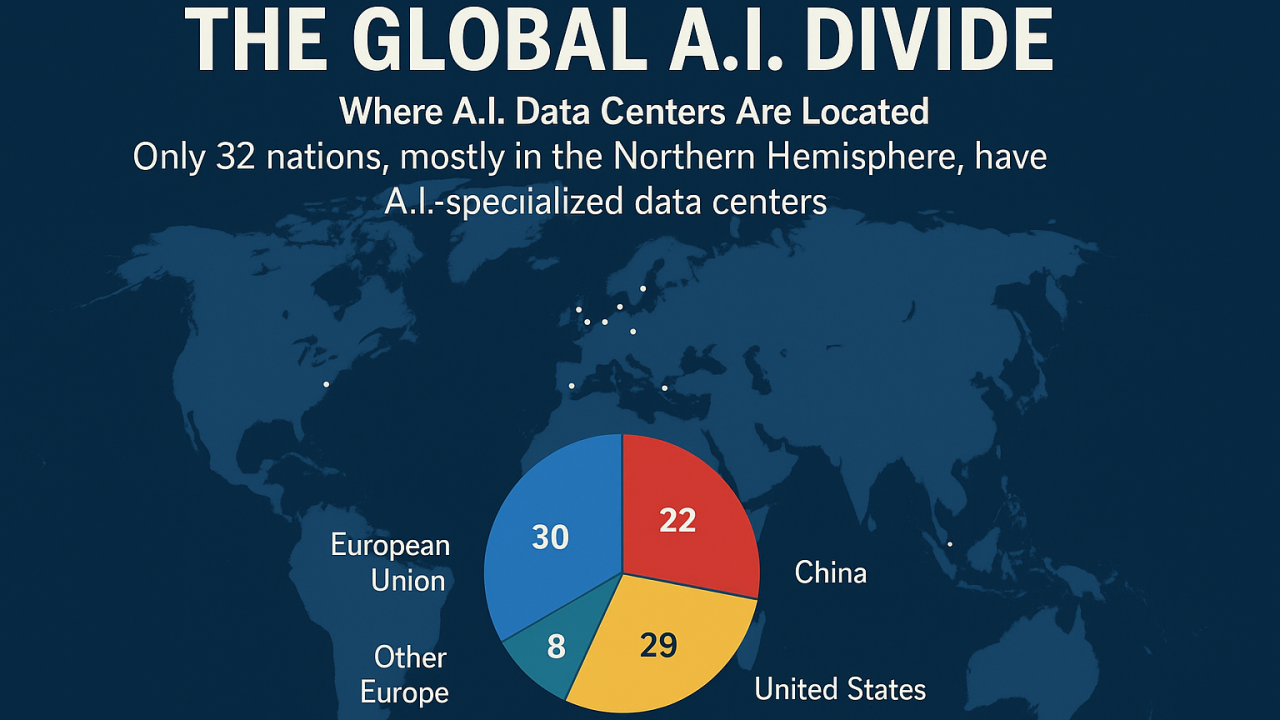

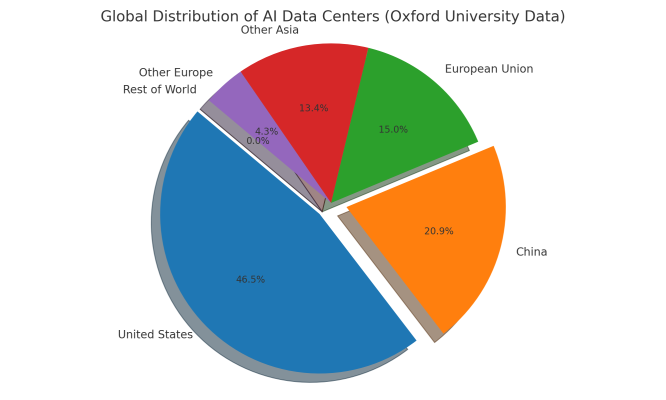

🌍 The Global AI Divide: A Wake-Up Call—With Saudi Arabia Joining the Race

As artificial intelligence transforms everything from healthcare and scientific discovery to national security and digital sovereignty, a clear truth has emerged: the world is dividing into two groups—those with AI compute power, and those without.

💡 A New Digital Frontier

Only 32 countries, mostly in the Northern Hemisphere, currently host AI-specialized data centers—critical infrastructure for training large-scale models, supporting local innovation, and enabling scientific discovery. Over 150 countries, spanning much of Africa and South America, are completely excluded from this landscape.

“We are losing,” says Nicolás Wolovick, an Argentinian researcher operating a small-scale AI lab in a converted classroom—an echo of the struggles faced across the Global South.

The result? An AI-powered compute divide, widening disparities in research capability, economic opportunity, and cultural representation.

🇺🇸🇨🇳 Compute Superpowers—And the Rest

The U.S. and China dominate global AI infrastructure, controlling over 90% of AI data centers, with companies such as Microsoft, Google, Amazon, Tencent, and Alibaba leading the expansion efforts. These giants also control access to Nvidia GPUs, the gold standard for cutting-edge AI. Countries lacking local computing capabilities must rely on distant cloud services, a costly workaround that introduces latency issues, legal complexities, and data sovereignty risks.

🇸🇦 Saudi Arabia Enters the Arena

Saudi Arabia is making a bold leap into the AI computing space:

- Humain, launched in May 2025 under the Public Investment Fund and led by Crown Prince Mohammed bin Salman, aims to develop a world-class AI infrastructure and handle 7% of global AI workloads by 2030.

- Massive deals totaling over $23 billion have been secured with industry leaders.

- Public-private projects include initiatives supporting local innovation ecosystems through events like LEAP and DeepFest—Saudi-funded forums that highlight AI talent, policy, and future trends.

These efforts mark a significant shift: Saudi Arabia is moving from a consumer to a creator of AI infrastructure, emphasizing compute sovereignty and global competitiveness.

📉 What’s at Stake

Without compute parity, countries face many risks:

- Innovation bottlenecks: No compute → limited research and development capacity.

- Startup constraints: Local ventures hindered by infrastructure gaps.

- Brain drain: Talent moves to compute-rich regions.

- Model bias: AI models trained mainly on dominant languages and contexts overlook global perspectives.

- Geopolitical vulnerability: Compute-dependent nations are vulnerable to foreign tech control.

Saudi Arabia’s strategy—massive investment, green energy integration, and multi-partner contracts—could serve as a model for other emerging economies aiming for AI parity.

🚨 The Call to Action

Bridging the compute gap requires bold, coordinated efforts:

- International coalitions to support compute deployment in underserved areas.

- Partnerships between governments, sovereign wealth funds, and hyperscalers.

- Talent pipelines through AI-focused education, training, and events.

- Sovereign compute laws to establish data embassies and legal protections.

- Green compute hubs powered by renewable energy, exemplified by Saudi’s NEOM and Oxagon projects.

AI leadership must be shared—it cannot be siloed. The rise of Saudi Arabia’s compute infrastructure via Humain marks a turning point. For AI to stay a global, inclusive movement, nations must invest in infrastructure, partnerships, and policies to distribute compute power fairly.

Let’s support a future where compute power is a right, not a privilege—and where innovation thrives everywhere. 🌍🚀

Contact With Me

Nevine Acotanza

Chief Operating Officer

I am available for freelance work. Connect with me by phone or email.

Phone: +012 345 678 90 Email: admin@example.com