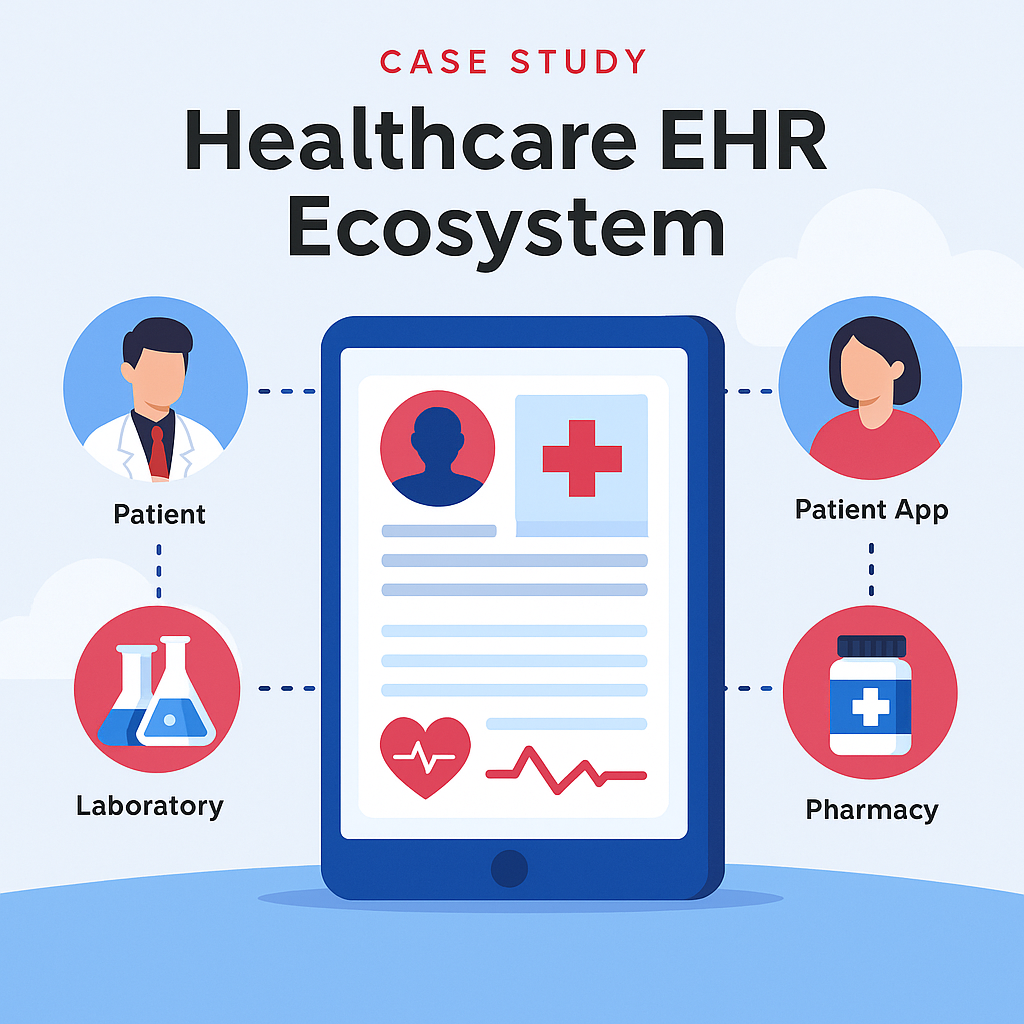

Case Study — Healthcare EHR Ecosystem

Industry: Healthcare & Medical Technology

Services: AI Consulting, Solution Architecture, Data Integration

Engagement: Architecture → Development → Deployment

A healthcare enterprise dedicated to building a comprehensive electronic health record (EHR) ecosystem that securely connects doctors, patients, labs, and pharmacies for seamless healthcare management and research access.

The project focused on developing a centralized EHR platform designed to unify medical data across multiple entities—enabling real-time access, better diagnostics, and improved patient outcomes.

The system integrates AI analytics, interoperability standards, and a secure data exchange framework to streamline medical operations.

Duration: 8–10 months

Technologies: Python, FastAPI, PostgreSQL, Azure Cloud, Power BI, HL7/FHIR APIs, LangChain, OpenAI API

Doctor Portal: Access patient records, prescriptions, and diagnostic history

Patient App: View medical records, appointments, and lab results

Lab Integration: Automated report uploads and result synchronization

Pharmacy Module: Prescription verification and fulfillment tracking

Analytics Dashboard: Real-time insights for care outcomes and research

Data Security: Role-based access, encryption, and audit logging

As the AI Consultant and Solution Architect, I led the end-to-end architecture of the ecosystem, integrating multiple healthcare modules into a single interoperable platform.

I implemented data standardization via HL7/FHIR, designed AI-driven analytics dashboards for patient outcomes, and optimized interoperability for scalability and compliance.

The platform was engineered for high security, modular expansion, and long-term data retention, supporting both clinical operations and academic research.

Industry: E-Commerce & Retail Technology

Services: AI Consulting, Solution Architecture, Product Strategy

Engagement: Concept → Development → Market Launch

A halal-focused e-commerce startup committed to promoting ethical and Sharia-compliant products through AI-driven product verification, recommendation, and consumer trust systems.

The initiative aimed to develop an AI-powered halal commerce platform that verifies product authenticity, categorizes halal-compliant items, and personalizes the user shopping experience.

The system combined AI classification models, data validation pipelines, and intelligent recommendation engines to ensure accuracy, transparency, and scalability across multiple product categories.

Duration: 6–9 months

Technologies: Python, FastAPI, OpenAI API, LangChain, PostgreSQL, Power BI, AWS Cloud, Streamlit / React

Halal Verification Engine: Uses AI to validate product data against certification standards

Recommendation System: Provides personalized suggestions based on user interests and verified compliance

Smart Search: AI-driven search and product categorization using NLP

Vendor Dashboard: Allows merchants to manage, verify, and update halal certifications

Consumer Interface: Intuitive UI for browsing, purchasing, and reviewing halal-certified products

As the AI Consultant and Solution Architect, I designed the platform’s intelligent backend to automate halal certification validation and product categorization.

I implemented AI classification pipelines to process product metadata, integrated API-based verification with halal authorities, and developed a personalized recommendation engine powered by user behavior analytics.

The result was a trusted, AI-first marketplace that blends compliance with modern user experience.

Industry: Public Sector & Nonprofit Technology

Services: AI Consulting, Product Management, Solution Architecture

Engagement: Ideation → Design → Implementation

A nonprofit-focused organization seeking to streamline the creation of AI governance policies and ensure responsible AI adoption across donor-funded projects and social-impact initiatives.

The goal was to build an AI Policy Builder platform that empowers nonprofits to craft, customize, and adopt ethical AI policies with ease.

The system guides users through a structured framework of templates, recommendations, and risk assessments—helping organizations establish compliance and transparency.

Duration: 4–6 months

Technologies: Python, FastAPI, PostgreSQL, Streamlit, LangChain, OpenAI API, Azure Cloud, Power BI

Policy Builder Wizard: Step-by-step guidance to create AI policies aligned with global standards

Template Library: Predefined and editable templates for governance, data usage, and ethics

AI Assistant: Suggests best practices based on organization type and AI maturity

Collaboration Tools: Enables multi-user editing and approval workflows

Dashboard: Tracks completion status, version control, and compliance metrics

As the AI Consultant and Product Architect, I led the platform’s full lifecycle—from conceptual framework to technical design and deployment.

I designed the modular policy engine, enabling customization for different NGO types, and integrated an LLM-driven recommendation system to provide context-aware policy suggestions.

The solution combined structured templates with dynamic AI guidance, ensuring nonprofits could easily create compliant and transparent AI policies.

Industry: Agriculture & AgriTech

Services: AI Consulting, Computer Vision, Automation Design

Engagement: Research → Development → Field Testing

An agri-tech initiative aiming to enhance crop productivity and reduce manual dependency through AI-driven monitoring, automation, and smart decision-making systems for farmers and agri-businesses.

The project involved developing an AI-powered agriculture automation platform that utilizes computer vision, IoT sensors, and predictive analytics to monitor crop health, detect diseases, and optimize irrigation and fertilizer usage.

The system provided real-time insights, helping farmers make data-backed decisions and improve yield efficiency.

Duration: 6–8 months

Technologies: Python, OpenCV, TensorFlow, Scikit-learn, IoT Sensors, Drone Imagery, Node-RED, AWS Cloud, Power BI

Crop Disease Detection: Identifies plant diseases using image recognition and pattern analysis

Soil & Weather Monitoring: Integrates IoT sensors to analyze soil moisture and weather data

Irrigation Automation: Smart control systems adjust watering schedules based on predictive models

Yield Forecasting: Uses AI to predict crop yield and optimize resource allocation

Farmer Dashboard: Displays real-time insights, alerts, and productivity metrics

As the AI Consultant and Solution Architect, I led the creation of an integrated AI ecosystem combining IoT devices, image analytics, and predictive algorithms.

I designed workflows that linked drone imagery with sensor data, enabling precision farming through actionable intelligence.

The architecture was cloud-based, scalable, and capable of adapting to different crop environments and climates.

Industry: Healthcare & Medical IoT

Services: AI Consulting, Solution Architecture, Product Development

Engagement: Concept → Prototype → Pilot

A health-tech startup focused on leveraging AI and IoT to monitor and detect respiratory diseases such as asthma and COPD through real-time spirometry data.

The objective was to develop an AI-powered spirometer system that could measure lung performance, detect early signs of respiratory issues, and transmit data to healthcare providers for continuous monitoring.

The solution combined IoT sensor technology, AI analytics, and cloud integration to create a scalable, connected health ecosystem.

Duration: 5–7 months

Technologies: Python, TensorFlow Lite, Scikit-learn, Node-RED, MQTT, AWS IoT, Firebase, Streamlit, Power BI

IoT Data Capture: Real-time airflow and pressure readings via spirometer sensors

AI Analytics: Machine learning algorithms for anomaly detection and health scoring

Dashboard: Visualize breathing trends, lung capacity, and historical reports

Mobile App Integration: Remote access for patients and doctors

Cloud Sync: Secure data transmission for research and multi-device tracking

As the AI Consultant and Solution Architect, I led the architecture and integration of the end-to-end IoT + AI system.

I developed the data pipeline connecting hardware sensors to AI inference models and implemented predictive algorithms for early disease identification.

The system adhered to healthcare compliance standards (HIPAA-ready) and enabled remote diagnostics through real-time data analytics.

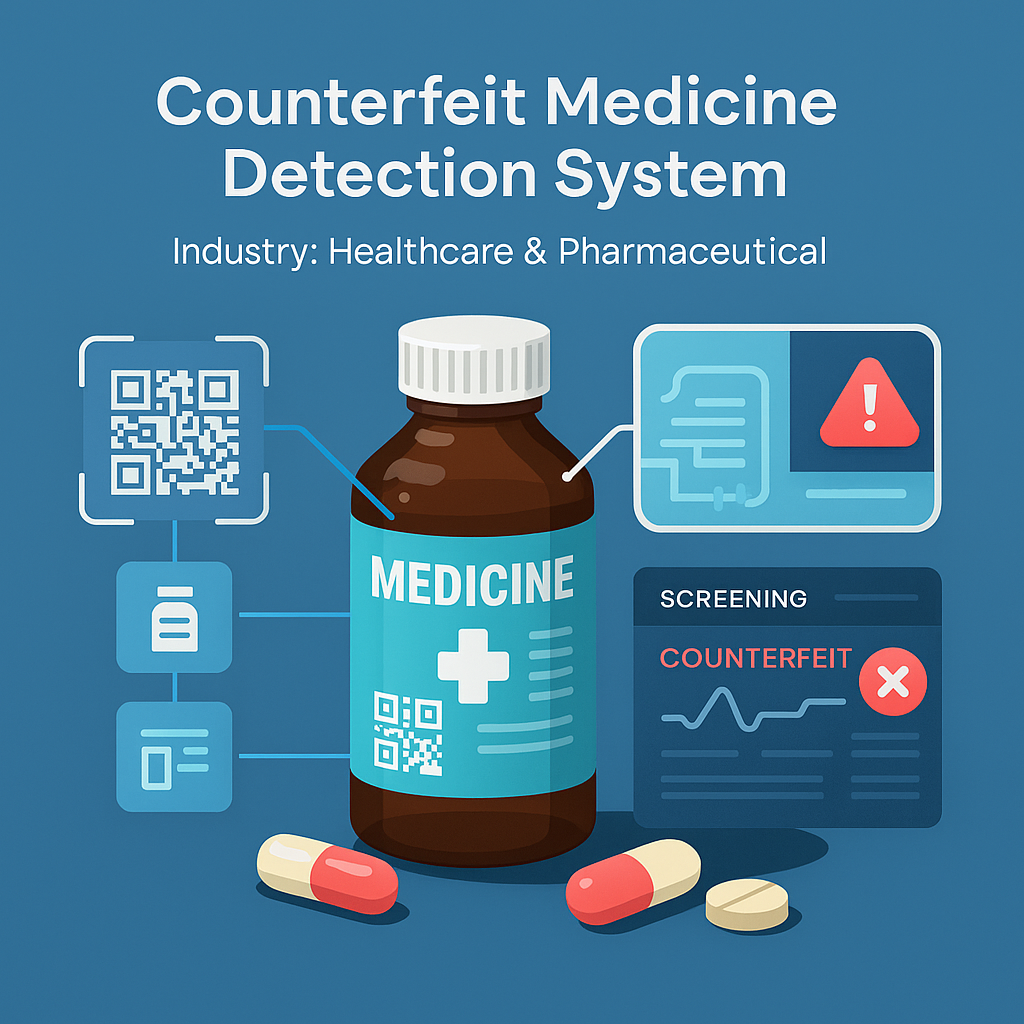

Industry: Healthcare & Pharmaceutical

Services: AI Consulting, Computer Vision, Product Development

Engagement: Research → Prototype → Validation

A healthcare technology initiative focused on improving drug authenticity verification and public safety by leveraging AI-driven detection and supply-chain transparency.

The project aimed to design an AI-based counterfeit medicine detection system capable of identifying fake pharmaceutical products before they reach patients.

The platform analyzed packaging, labeling, QR codes, and chemical patterns using AI and computer vision to ensure authenticity during distribution.

Duration: 6–8 months

Technologies: Python, TensorFlow / PyTorch, OpenCV, YOLOv5, FastAPI, AWS S3, PostgreSQL, Pandas, NumPy

Image Analysis: Detect inconsistencies in labels, texture, and holograms using deep learning

Barcode & QR Validation: Verify serialization and product metadata against trusted databases

Supply Chain Integration: Real-time verification for pharmacies and distributors

Dashboard: Display analytics, detection reports, and risk scores

Mobile Interface: Allow on-the-spot verification via smartphone camera

As the AI Solution Architect and Consultant, I designed and implemented the entire detection pipeline — from image preprocessing to deep learning classification.

Using YOLOv5 for object detection and CNN-based models for feature validation, the system achieved high precision in distinguishing authentic vs counterfeit medicines.

The architecture was modular, scalable, and cloud-hosted, ensuring fast inference and easy integration with pharmacy systems.

Gained a strong foundation in programming, algorithms, databases, and software engineering with a focus on applied computing and innovation.

Certified Entrepreneur – Causation Business Model. Learned startup development, innovation, and strategic business planning through real-world entrepreneurial frameworks.

Hands-on professional training in applied AI, data preprocessing, model building, and deployment of machine learning solutions.

Studied strategy, finance, and operations with a focus on the effectuation business model for startups and growth ventures.

Certified in data analysis, visualization, and insights generation using spreadsheets, SQL, and data storytelling techniques.

Completed Effectuation: Lessons from Expert Entrepreneurs — exploring decision frameworks used by top entrepreneurs globally.

Selected as a Fellow & Scholar in Applied Data and AI Innovation, focusing on data equity, AI ethics, and product scalability.

Recognized for leadership in entrepreneurship and innovation—demonstrating expertise in business design, product execution, and AI-driven growth.

Defined and delivered AI solutions, including semantic search and AI chatbots for banking and enterprise automation.

Worked as an AI Solution Architect on the Counterfeit Medicine Detection System, designing AI pipelines to identify fake pharmaceuticals with 95% accuracy. Mentored global fellows in AI innovation, data equity, and applied research projects.

Led end-to-end AI implementation and developed the AI Policy Builder for nonprofits, improving workflow efficiency by 40%.

Directed enterprise AI projects — implemented donor analytics and e-commerce AI boosting engagement and sales.

Built an AI-powered education platform integrating metaverse learning and adaptive content systems.

Developed adaptive AI learning models and translated technical systems into business impact.

Enhanced lead generation by 25% through predictive data analytics and marketing optimization.

Automated data scraping systems, expanding product databases by 200% and reducing update time by 50%.

Founded a digital health startup improving EHR access and patient data management efficiency by 50%.

Imagine your team wants an AI assistant that can pull data from documents, interact with your apps, and automate tasks – all without custom-coding every integration. The Model Context Protocol (MCP) offers a way to make this happen. MCP is an open standard that lets AI agents plug into tools and data sources like a universal adaptor. Think of MCP like a USB-C port for AI applications – it provides a standardized way to connect AI models to different data and tools. In this article, we’ll walk through 9 steps to build an MCP-powered AI agent from scratch, blending a real-world narrative with technical how-to. Whether you’re a developer or a product manager, you’ll see how to go from a bright idea to a working AI agent that can actually do things in the real world.

In short: MCP replaces one-off hacks with a unified, real-time protocol built for autonomous agents. This means instead of writing custom code for each tool, your AI agent can use a single protocol to access many resources and services on demand. Let’s dive into the step-by-step journey.

Every successful project starts with a clear goal definition. In this step, gather your technical and business stakeholders to answer: What do we want the AI agent to do? Be specific about the use cases and the value. For example, imagine Lucy, a product manager, and Ray, a developer, want an AI assistant to help with daily operations. They list goals like:

Defining the scope helps align expectations. A focused agent (say, an “AI Project Assistant”) is easier to build than a do-everything agent. At this stage, involve business stakeholders to prioritize capabilities that offer real ROI. Keep the scope realistic for a first version; you can always expand later.

Deliverable: Write a short Agent Charter that outlines the agent’s purpose, users, and key tasks. This will guide all subsequent steps.

With goals in mind, identify what capabilities the agent needs and which tools or data sources will provide them. In MCP terms, these external connections will be MCP servers offering tools and resources to the AI agent. Make a list of required integrations, for example:

For each needed function, decide if an existing service or API can fulfill it, or if you’ll build a custom tool. MCP is all about standardizing these connections: you might find pre-built MCP servers for common services (file systems, GitHub, Slack, databases, etc.), or you may implement custom ones. The good news is that as MCP gains adoption, a marketplace of ready connectors is emerging (for example, directories like mcpmarket.com host plug-and-play MCP servers for many apps). Reusing an existing connector can save time.

Tool Design Tip: Don’t overload your agent with too many granular tools. MCP best practices suggest offering a few well-designed tools optimized for your agent’s specific goals. For instance, instead of separate tools for “search document by title” and “search by content”, one search_documents tool with flexible parameters might suffice. Aim for tools that are intuitive for the AI to use based on their description.
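As a rough illustration of the tip above, here is a minimal sketch of one search tool with flexible parameters instead of two narrow ones. The `Document` class, the `DOCS` corpus, and the `field` and `limit` parameters are assumptions made up for this example; a real tool would query your actual document store.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Document:
    title: str
    content: str


# Hypothetical in-memory corpus standing in for a real document store.
DOCS = [
    Document("Q3 Sales Report", "Regional sales grew in the third quarter."),
    Document("Onboarding Guide", "How to set up accounts for new hires."),
    Document("Sales Playbook", "Pricing guidance and discount rules."),
]


def search_documents(query: str, field: str = "any", limit: Optional[int] = None) -> list[str]:
    """Return titles of documents whose title and/or content contains the query.

    field: "title", "content", or "any" (either one matches).
    limit: optional cap on the number of titles returned.
    """
    if field not in {"title", "content", "any"}:
        raise ValueError(f"unknown field: {field}")
    needle = query.lower()
    hits = []
    for doc in DOCS:
        in_title = needle in doc.title.lower()
        in_content = needle in doc.content.lower()
        matched = (
            (field == "title" and in_title)
            or (field == "content" and in_content)
            or (field == "any" and (in_title or in_content))
        )
        if matched:
            hits.append(doc.title)
    return hits[:limit] if limit is not None else hits


print(search_documents("sales", field="title"))
```

A single tool with a clear description and a small set of parameters gives the model fewer choices to get wrong than several near-identical tools.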

By the end of this planning step, you should have a clear mapping of capabilities → tools/data. For our example, Lucy and Ray decide the agent needs:

This planning sets the stage for development. Now it’s time to prepare the data and context that the agent will use.

One key capability in many AI agents is retrieving relevant information on the fly. This is often achieved through Retrieval-Augmented Generation (RAG), where the agent fetches reference data (e.g. documents, knowledge base entries) to ground its answers. Here, vector databases and embeddings come into play.

Embeddings are numerical representations of text (or other data) that capture semantic meaning. Essentially, an embedding model turns a piece of text into a list of numbers (a vector) such that similar texts map to nearby vectors in a high-dimensional space. In practical terms, if two documents talk about similar topics, their embeddings will be mathematically close, enabling the AI to find relevant content by semantic similarity. For example, an embedding model encodes data into vectors that capture the data’s meaning and context, so we can find similar items by finding neighboring vectors.

A vector database stores these embeddings and provides fast search by vector similarity. You can imagine it as a specialized search engine: you input an embedding (e.g. for a user’s query) and it returns the most similar stored embeddings (e.g. paragraphs from documents), often using techniques like nearest-neighbor search. This allows the agent to pull in relevant snippets of information beyond what’s in its prompt or training data, greatly enhancing its knowledge.
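To make the idea concrete, here is a toy sketch of similarity search over stored vectors. It assumes cosine similarity as the distance measure and uses hand-made three-dimensional vectors in place of real embeddings; `TinyVectorStore` is a hypothetical class for illustration, not any particular vector database's API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


class TinyVectorStore:
    """Brute-force in-memory store; real vector databases use indexes instead."""

    def __init__(self):
        self._items = []  # list of (text, vector) pairs

    def store(self, text, vector):
        self._items.append((text, vector))

    def find_similar(self, query_vector, top_k=3):
        # Score every stored vector against the query, then keep the best top_k.
        scored = [(cosine_similarity(query_vector, vec), text) for text, vec in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:top_k]]


# Hand-made vectors; a real embedding model would produce hundreds of dimensions.
store = TinyVectorStore()
store.store("Quarterly sales summary", [0.9, 0.1, 0.0])
store.store("Holiday schedule", [0.0, 0.2, 0.9])
store.store("Regional revenue breakdown", [0.8, 0.3, 0.1])

query_vector = [1.0, 0.2, 0.0]  # stands in for the embedding of a sales question
top = store.find_similar(query_vector, top_k=2)
print(top)
```

The two sales-related entries rank above the unrelated one because their vectors point in nearly the same direction as the query vector.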

For our project, Ray sets up a small pipeline to ingest the company’s internal documents into a vector DB:

Here’s a pseudocode example of how this might look:

```python
# Pseudocode: prepare the vector database.
# load_all_internal_docs, embedding_model, and vector_db are placeholders
# for your own data source, embedding model, and vector store.
documents = load_all_internal_docs()  # your data source
embeddings = [embedding_model.embed(doc.text) for doc in documents]
vector_db.store(items=documents, vectors=embeddings)

# Later, for a query:
query = "What were last quarter's sales in region X?"
q_vector = embedding_model.embed(query)
results = vector_db.find_similar(q_vector, top_k=3)
for res in results:
    print(res.text_snippet)  # relevant content the agent can use in its answer
```

Now the agent has a knowledge resource: it can query this vector DB to get facts and figures when needed. In MCP terms, this vector database will likely be exposed as a resource or a tool on an MCP server (more on that in a moment). In fact, using MCP for retrieval is a powerful pattern: MCP can connect to a vector database through a server action, letting an agent perform a semantic search on demand. This means our agent doesn’t need all knowledge upfront in its prompt – it can call a “search” tool to query the vector DB whenever the user asks a question requiring external info.

Before coding the agent, Lucy ensures that the business side (e.g. privacy, compliance) is okay with storing and accessing this data. With the green light given, the vector store is ready and filled with up-to-date knowledge for the AI to draw upon.

Now it’s time to get hands-on with MCP (Model Context Protocol) itself. At its core, MCP has a client-server architecture. The AI agent (host) uses an MCP client to communicate with one or more MCP servers. Each MCP server provides a set of tools, resources, or prompts that the agent can use.

In our scenario:

First, decide on the development stack and environment:

MCP’s architecture is straightforward once you see it: the agent doesn’t call tool APIs directly; instead, it sends a structured request to an MCP server, which then translates it into the actual action (be it a database query or API call). This decoupling means the agent doesn’t need to know the low-level details – it just knows the name of the tool and what it’s for. The server advertises these capabilities so the agent can discover them. MCP ensures all communication follows a consistent JSON-based schema, so even if the agent connects to a new tool it’s never seen, it can understand how to use it.
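To give a feel for what a structured request looks like, here is a small sketch that builds two messages as plain Python dictionaries. It assumes MCP's JSON-RPC 2.0 message framing with the `tools/list` and `tools/call` methods; the `make_request` helper, the `search_docs` tool name as used here, and the placeholder response text are illustrative only, so check the current specification before relying on exact field names.

```python
import json


def make_request(request_id, method, params=None):
    """Build a JSON-RPC 2.0 request envelope (hypothetical helper)."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it offers.
list_request = make_request(1, "tools/list")

# Invoke one tool by name with structured arguments.
call_request = make_request(
    2,
    "tools/call",
    {"name": "search_docs", "arguments": {"query": "Q3 sales for Product A"}},
)

# Illustrative shape of a successful reply; the text is a placeholder, not real data.
call_response = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {
        "content": [{"type": "text", "text": "(matching snippet would appear here)"}],
        "isError": False,
    },
}

print(json.dumps(call_request, indent=2))
```

Because every tool call has the same envelope, the agent only needs the tool's name and argument schema to use a server it has never seen before.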

Key Concepts:

With the environment set up, Lucy and Ray have the MCP groundwork ready. Next, they’ll create the specific tools and resources on the MCP servers to fulfill the agent’s needs.

This is the core development step: building the MCP server(s) that expose the functionalities we planned. If you found a pre-built MCP server for some tool (e.g. an existing “calendar” server or “filesystem” server), you can simply run or adapt it. But here, we’ll assume you’re making custom integrations from scratch to see how it’s done.

a. Creating an MCP Server: An MCP server is essentially an application (could be a simple script or web service) that defines a set of actions (tools/resources) and handles requests for them. For instance, to implement our Document Search capability, Ray creates a server (let’s call it “KnowledgeServer”) with a tool or resource named search_docs. The server code will roughly:

b. Tool Registration: Once the server logic is ready, you “register” the tools so that an MCP client can discover them. In practice, if using an MCP SDK, this might mean adding the tool definitions to the server object. Many MCP frameworks will automatically share the tool list when the agent connects (this is known as capability discovery). For example, the server might implement a method to list its tools; when the agent connects, it fetches this list so the AI knows what’s available. In code, this can be as simple as adding each tool to the server with its handler function. If you’re writing servers in Node or Python, you might use an SDK function to register a tool, providing its name, input schema, and a function callback to execute.
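The registration and discovery flow described here can be sketched without any SDK. `ToyToolServer` below is a hypothetical stand-in written for this example, not a real MCP SDK class; actual SDKs have their own registration calls, and the tiny corpus is made-up sample text.

```python
from typing import Any, Callable


class ToyToolServer:
    """Hypothetical stand-in for an MCP server object; not a real SDK class."""

    def __init__(self, name: str):
        self.name = name
        self._tools: dict[str, dict[str, Any]] = {}

    def register_tool(self, name: str, description: str,
                      input_schema: dict, handler: Callable[..., Any]) -> None:
        # Store the metadata the agent will see plus the callback to execute.
        self._tools[name] = {
            "description": description,
            "input_schema": input_schema,
            "handler": handler,
        }

    def list_tools(self) -> list[dict]:
        # Capability discovery: expose names, descriptions, and schemas only.
        return [
            {"name": n, "description": t["description"], "input_schema": t["input_schema"]}
            for n, t in self._tools.items()
        ]

    def call_tool(self, name: str, arguments: dict) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name]["handler"](**arguments)


def search_docs(query: str) -> list[str]:
    """Toy handler: substring match over a made-up corpus."""
    corpus = ["Q3 sales overview", "Travel policy", "Q3 hiring plan"]
    return [line for line in corpus if query.lower() in line.lower()]


knowledge_server = ToyToolServer("KnowledgeServer")
knowledge_server.register_tool(
    name="search_docs",
    description="Search internal documents by keyword.",
    input_schema={"type": "object", "properties": {"query": {"type": "string"}},
                  "required": ["query"]},
    handler=search_docs,
)

print(knowledge_server.list_tools())
print(knowledge_server.call_tool("search_docs", {"query": "q3"}))
```

Keeping the handler separate from the listed metadata means the agent sees only the name, description, and input schema, never the implementation.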

c. Handling Resources: If some data is better exposed as a read-only resource (for instance, a static database or a subscription feed), MCP supports that too. In our case, we could treat the vector DB as a resource. The server would expose it such that the agent can query or subscribe to updates. The difference is mostly semantic – tools vs resources – but resources might be listed separately in something like the MCP Inspector interface (which has a Resources tab).

d. Security and permissions: At this point, consider what each tool is allowed to do. MCP servers often run with certain credentials (API keys, database access) and you might not want to expose every function to the agent. Implement permission checks or scopes if needed. For example, ensure send_email can only email internal domains, or the search_docs can only access non-confidential docs. MCP encourages scoped, narrowly-permissioned servers to avoid over-privileged agents.
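One way to express the internal-domains rule is a check that runs inside the tool before any side effect happens. This is a minimal sketch: `ALLOWED_EMAIL_DOMAINS`, `PermissionDenied`, and the `example.com` domain are assumptions for illustration, and the function only reports what it would send rather than calling a mail API.

```python
ALLOWED_EMAIL_DOMAINS = {"example.com"}  # hypothetical internal domain


class PermissionDenied(Exception):
    """Raised when a tool call falls outside the server's allowed scope."""


def check_recipient(address: str) -> None:
    # Split on the last "@"; reject malformed addresses and external domains.
    _, sep, domain = address.rpartition("@")
    if not sep or domain.lower() not in ALLOWED_EMAIL_DOMAINS:
        raise PermissionDenied(f"recipient not allowed: {address}")


def send_email(to: str, subject: str, body: str) -> str:
    check_recipient(to)
    # A real server would call the mail API here; this sketch only reports intent.
    return f"queued email to {to}: {subject}"


print(send_email("lucy@example.com", "Standup notes", "See attached."))
```

Enforcing the rule in the server, rather than in the prompt, means the restriction holds even if the model is persuaded to try an external address.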

By the end of Step 5, you have one or more MCP servers implemented with the necessary tools/resources. They’re essentially adapters: converting the AI’s requests into real actions and then returning results. For instance, our KnowledgeServer takes an AI query like “Find Q3 sales for Product A” and translates it into a database lookup or vector DB search, then gives the answer back to the AI.

Before unleashing the whole agent, it’s wise to test these servers in isolation. This is where the next step – using the MCP Inspector – becomes invaluable.

Even the best plan needs testing. The MCP Inspector is a developer tool that provides an interactive UI to load your MCP server and poke at it to ensure everything works correctly. Think of it as a combination of API tester and live debugger for MCP.

Ray fires up the MCP Inspector for the KnowledgeServer:

Using the Inspector, Lucy and Ray iteratively refine the servers:

By the end of testing, the MCP servers are robust and ready. Importantly, this step gave the confidence that each piece works in isolation. It’s much easier to troubleshoot issues here than when the AI is in the loop, because you can directly see what the server is doing. As a best practice, treat the Inspector as your friend during development – it significantly speeds up debugging of MCP integrations.

Now for the fun part: bringing the AI brain into the picture and configuring the agent application. At this stage, we have:

What we need now is the actual AI agent logic that will use a Large Language Model (LLM) to interpret user requests, decide which tools to call, and compose responses. This typically involves using an AI model (like GPT-4, Claude, etc.) and an agent orchestration framework or prompt strategy (for example, a ReAct prompt that allows the model to reason and choose tools).

a. Building the Agent’s Brain: The agent is essentially an LLM with an added ability to use tools. Many frameworks (LangChain, OpenAI function calling, etc.) exist for this, but MCP can work with any as long as you connect the MCP client properly. If using the OpenAI Agents SDK (hypothetically), one might configure an Agent and pass in the MCP servers as resources. In code, it could look like:

```python
llm = load_your_llm_model()  # e.g. an API wrapper for GPT-4

agent = Agent(
    llm=llm,
    tools=[],  # could also include non-MCP tools if any
    mcp_servers=[knowledge_server, productivity_server],  # attach our MCP servers
)
```

When this agent runs, it will automatically call list_tools() on each attached MCP server to learn what tools are available. So the agent might get a list like: [search_docs, schedule_meeting, send_email] with their descriptions. The agent’s prompt (which you craft) should instruct it to use these tools when appropriate. For example, you might use a prompt that says: “You are a helpful assistant with access to the following tools: [tool list]. When needed, you can use them in the format: ToolName(inputs).” Modern LLMs can follow such instructions and output a structured call (like JSON or a special format) indicating the tool use.
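The prompt-and-parse loop described above might look roughly like this. It is a sketch under assumptions: the JSON call format, the tool descriptions, and both helper functions are invented for illustration, and many frameworks handle this step for you through native function calling.

```python
import json

# Tool names come from the example above; descriptions are illustrative.
TOOLS = [
    {"name": "search_docs", "description": "Semantic search over internal documents."},
    {"name": "schedule_meeting", "description": "Create a calendar event."},
    {"name": "send_email", "description": "Send an email to a colleague."},
]


def build_system_prompt(tools):
    """Render the discovered tool list into instructions for the model."""
    lines = ["You are a helpful assistant with access to the following tools:"]
    lines += [f"- {t['name']}: {t['description']}" for t in tools]
    lines.append('To use a tool, reply with JSON only: {"tool": "<name>", "arguments": {...}}')
    return "\n".join(lines)


def parse_tool_call(model_output, tools):
    """Return (tool_name, arguments) if the output is a valid tool call, else None."""
    try:
        data = json.loads(model_output)
    except json.JSONDecodeError:
        return None  # plain-text answer, not a tool call
    if not isinstance(data, dict):
        return None
    known = {t["name"] for t in tools}
    if data.get("tool") not in known:
        return None  # never dispatch a tool the server did not advertise
    arguments = data.get("arguments", {})
    if not isinstance(arguments, dict):
        return None
    return data["tool"], arguments


print(build_system_prompt(TOOLS))
print(parse_tool_call('{"tool": "search_docs", "arguments": {"query": "Q3 sales"}}', TOOLS))
```

Validating the tool name against the advertised list before dispatching guards against the model inventing a tool that does not exist.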

b. Agent App Configuration: Beyond the LLM and tool hookup, consider the app environment:

c. Testing the Integrated Agent: Before deploying, try some end-to-end queries in a controlled setting. For example:

If any of these fail, you may need to refine the prompt or provide more examples to the model on how to use the tools (few-shot examples in the system prompt can help). This is a bit of an art – effectively prompt engineering and agent coaching. But once it’s working, you truly have an AI agent that’s context-aware, meaning it can fetch real data and take actions, not just chat generically.

Lucy and Ray can now see their creation in action: the AI assistant responds to questions with actual data from their knowledge base, and can perform tasks like scheduling meetings. The gap between AI and real-world action is being bridged by MCP.

Having a prototype running on a developer’s machine is great, but to be useful, it needs to be accessible to users (which could be internal team members or external customers). Deployment involves making both the agent application and the MCP servers available in a reliable, scalable way.

Key considerations for deployment:

During deployment, also consider failure modes. What if a tool fails (e.g., email API down) – does the agent handle it gracefully (perhaps apologizing to user and logging the error)? It’s wise to implement fallback responses or at least error messages that make sense to the end-user, rather than exposing technical details. MCP servers typically handle errors by returning structured error responses that the client (agent) can interpret, so make sure to propagate those to the user in a friendly way.
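A simple way to keep technical details out of user-facing replies is to wrap every tool call, log the real error, and return a friendly message instead. The wrapper, the message table, and the simulated outage below are all assumptions for illustration.

```python
import logging

logger = logging.getLogger("agent")

# Hypothetical per-tool messages shown to end users when a call fails.
FRIENDLY_MESSAGES = {
    "send_email": "I couldn't send that email right now. Please try again in a few minutes.",
}
DEFAULT_MESSAGE = "Something went wrong while completing that request. Please try again later."


def call_tool_safely(tool_name, call, *args, **kwargs):
    """Run a tool call; on failure, log details and return a user-safe message."""
    try:
        return {"ok": True, "result": call(*args, **kwargs)}
    except Exception as exc:
        # Full detail goes to the log, never to the user.
        logger.error("tool %s failed: %r", tool_name, exc)
        return {"ok": False, "message": FRIENDLY_MESSAGES.get(tool_name, DEFAULT_MESSAGE)}


def flaky_email(to):
    raise ConnectionError("smtp gateway timeout")  # simulated outage


outcome = call_tool_safely("send_email", flaky_email, "ray@example.com")
print(outcome["message"])
```

The agent can then pass `outcome["message"]` to the user while operators read the full error in the logs.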

With everything deployed, your AI agent is now in the wild, working across the systems you connected. It’s time to look at the bigger picture and future expansion.

The final step is an ongoing one: scaling and evolving your MCP-based AI agent across the organization and to new use cases. This is where the true payoff of MCP’s standardized approach becomes evident.

Here are ways to scale and expand:

Scaling is not just about tech; it’s about organizational adoption. Lucy can champion how the AI agent saves everyone time, turning skeptics into supporters. With robust MCP-based infrastructure, the team can confidently say yes to new feature requests because the modular architecture handles growth gracefully. Instead of a monolithic AI system that’s hard to change, you have a Lego set of AI tools – adding a new piece is straightforward without breaking others.

In this journey, we saw how a team can go from a simple idea – “let’s have an AI assistant that actually does things” – to a working agent powered by the Model Context Protocol. We followed a 9-step framework: from clearly defining goals and planning capabilities, through building the data foundation with vector embeddings, setting up the MCP environment, implementing and registering tools/resources, testing with the MCP Inspector, wiring up the AI model, and finally deploying and scaling the solution. Throughout, we used a narrative example to make it tangible how each step might look in practice.

The result is an AI agent that is more than just a chatty assistant – it’s action-oriented and context-aware. By leveraging MCP’s open standard, our agent can seamlessly connect to various services and data sources in real time, which is a big leap from traditional isolated AI models. Instead of custom code for every integration, MCP gave us a plug-and-play architecture where the focus was on what the agent should do, not how to wire it all up.

For developers, MCP offers a flexible framework to build complex workflows on top of LLMs, while ensuring compatibility and structured interactions. For business stakeholders, it means AI solutions that can actually operate with live data and systems, accelerating automation and insights. It’s a win-win: faster development and more capable AI agents.

As you consider adopting MCP for your own projects, remember that it’s an open protocol and community-driven effort. There’s a growing ecosystem of tools, SDKs, and pre-built connectors that you can tap into. The story of Lucy and Ray’s agent is just one example – across industries from finance to marketing to operations, the approach is similar. Define the goal, assemble the pieces with MCP, and let your AI agents loose on real-world tasks.

In summary, building an MCP AI agent from scratch may involve many moving parts, but each step is manageable and logical. And the end product is incredibly powerful: an AI that not only understands language, but can take action using the full context of your organization’s knowledge and tools. It’s a glimpse into the future of AI in the enterprise – a future where AI agents are as integrated into our software stack as any microservice or API. Given the momentum behind MCP (with companies like Anthropic, Microsoft, and others championing it), now is a great time to start building with this new “USB-C for AI.” Your team’s next big AI idea might be closer to reality than you think.

What use case would you build first if you had your own MCP-powered AI agent?

Do you believe MCP will become the standard interface layer for enterprise AI agents — or is there another protocol you’re betting on?

Over the past few days, everyone has been talking about HUMAIN. Colleagues, friends – even non-tech folks – keep asking me, “What exactly is HUMAIN?” As an AI professional in Saudi Arabia, I’ve decided to write this in-depth article to explain what HUMAIN is all about. My goal is to make it easy for everyone – from students and CEOs to investors and the general public – to understand this trending initiative that has filled us with excitement and national pride.

Crown Prince Mohammed bin Salman launched HUMAIN in May 2025 as a Public Investment Fund (PIF) company, aiming to position Saudi Arabia as a global AI leader.

HUMAIN is not just another tech startup – it’s a nation-scale AI initiative. Officially launched on May 12, 2025, by Crown Prince Mohammed bin Salman, HUMAIN is a PIF-owned artificial intelligence company with a bold mandate: to drive the Kingdom’s AI strategy and make Saudi Arabia a global hub for AI innovation. This aligns squarely with Vision 2030, Saudi Arabia’s blueprint to diversify the economy beyond oil through technology and innovation.

The launch of HUMAIN was high-profile and symbolic. It was announced during a Saudi-U.S. investment forum in Riyadh, attended by the U.S. President and top tech leaders like Elon Musk, Sam Altman (OpenAI CEO), Andy Jassy (Amazon CEO), Jensen Huang (NVIDIA CEO), and others. In other words, the world was watching as Saudi Arabia declared its AI ambitions. The Kingdom has already been recognized for its commitment – the Global AI Index 2024 ranked Saudi Arabia first in the world for government AI strategy. HUMAIN is the flagship to implement that strategy, “empowering humanity through AI” and placing Saudi Arabia at the forefront of the AI race.

This is a source of national pride. It’s inspiring to see Saudi Arabia move from consuming technology to creating it. We’re talking about a country that’s rapidly transforming – and HUMAIN embodies that transformation in the AI domain.

So, what exactly does HUMAIN do? In short: pretty much everything in AI. HUMAIN is designed as a full end-to-end AI value-chain provider, meaning it operates across all layers of AI development – from the core infrastructure up to user-facing applications. According to the official announcements, HUMAIN will provide a “comprehensive range of AI services, products and tools, including next-generation data centers, AI infrastructure and cloud capabilities, and advanced AI models and solutions.” One marquee goal is developing one of the world’s most powerful multimodal Arabic large language models (LLMs) – more on that later.

In practical terms, HUMAIN’s scope covers four key areas:

This end-to-end approach is unique and ambitious. Instead of focusing on just one niche, HUMAIN aims to be a one-stop AI powerhouse – from silicon to software, from data centers to consumer apps. It’s backed directly by the sovereign wealth fund (PIF), meaning it has the capital and strategic support to pursue long-term, big-picture projects that might be too risky for a typical private startup.

Crucially, HUMAIN is meant to serve not just Saudi Arabia, but the region and the world. The company’s mission statement says it wants to enhance human capabilities and unlock new possibilities through the digital economy. By building local capabilities in AI, Saudi Arabia isn’t just importing technology – it’s creating homegrown innovations that can be exported globally. This reflects a shift to a knowledge-based economy, creating high-tech jobs and intellectual property within the Kingdom.

One of the most exciting aspects of HUMAIN – especially for those of us in the Middle East – is its focus on Arabic AI. For years, Arabic speakers (over 400 million people worldwide) and Muslims (around 2 billion people) have been underserved by generative AI tools. Most AI chatbots and content generators are geared toward English or Chinese. HUMAIN is changing that.

The company’s flagship AI model is called ALLAM 34B, a 34-billion-parameter Arabic-first large language model. It’s been described as “the world’s most advanced Arabic-first AI model, fluent in Islamic culture, values and heritage”. In August 2025, HUMAIN launched HUMAIN Chat, a next-generation conversational AI app powered by ALLAM 34B. This is a big deal: it’s the first AI chatbot built in the Arab world, for the Arab world, as a fully bilingual assistant (Arabic and English).

HUMAIN Chat is available on web, iOS, and Android, and it represents a national milestone – a sovereign AI product born in Saudi Arabia. For the first time, people can interact with an AI in their own Arabic dialects and cultural context. The app can understand Arabic queries (including voice input in multiple dialects) and respond with culturally aware answers. Its features include real-time web search (so it always has up-to-date knowledge), seamless switching between Arabic and English in one conversation, and even the ability to share conversations for collaboration. Importantly, all of this is hosted on Saudi infrastructure with full compliance to local data laws, ensuring privacy and sovereignty.

Why is this so inspiring? Because it “closes a historic gap in digital inclusion”. Now, a student in Riyadh or an entrepreneur in Jeddah can use generative AI in Arabic to brainstorm ideas, learn new concepts, or get customer service – without language being a barrier. The AI’s knowledge is not just translated; it’s grounded in our values, heritage, and history. As the HUMAIN CEO Tareq Amin put it during the launch: “We are proving that globally competitive technologies can be rooted in our own language, infrastructure, and values – built in Saudi Arabia by Saudi talent. This is not the end state, but the beginning of a journey… The potential is limitless”. That mix of technical excellence and cultural authenticity is at the heart of HUMAIN’s vision.

From a technical perspective, ALLAM 34B is a milestone in AI development. It was trained on one of the largest Arabic datasets ever assembled, and then refined with input from 600+ domain experts and 250 evaluators across disciplines. According to independent evaluations (by AI firm Cohere on the MMLU benchmark), ALLAM 34B is the most advanced Arabic LLM ever built in the region. And it’s not just Arabic-only – it’s fully bilingual, so it can handle English as well, but with Arabic as its first language. The model was built by a team of over 120 AI specialists (including 35 PhDs), with a 50/50 gender balance, “hosted in Saudi Arabia, by Saudis, with global talent alongside them”. This emphasis on developing local talent (men and women alike) in a cutting-edge field is another aspect of HUMAIN’s impact.

To illustrate what this means, imagine asking a typical AI chatbot about an Arabic poem or a historical event in Islamic history. Many current AI models might struggle or give generic answers. HUMAIN’s ALLAM model, on the other hand, has been deliberately aligned with Islamic, Middle Eastern cultural nuances. It can discuss Al-Mutanabbi’s poetry or explain the significance of Ramadan with a depth and fluency that foreign models lack. For businesses, an Arabic-first AI can better serve local customers; for governments, it can operate with full data sovereignty. This is AI built on our own terms.

HUMAIN Chat is just the first product in what the company calls its “HUMAIN IQ” portfolio – a new generation of AI solutions that marry scientific depth with responsible design. We can expect more to come, perhaps sector-specific AI assistants or advanced analytics tools, all leveraging the ALLAM model and future models. As users engage with HUMAIN Chat, the model will continue to learn and improve. The company has even issued a call to action: for every Arabic speaker to use it, test it, and help shape it into the world’s leading Arabic AI. It’s a collective effort – by using the app, we’re essentially helping to train and refine an AI that represents us.

Building something as grand as HUMAIN requires serious investment and partnerships. And indeed, HUMAIN is backed by multi-billion-dollar deals and collaborations that have made headlines in the tech and business world. This is where the business-focused angle comes in. Saudi Arabia is putting its money (and relationships) where its mouth is to ensure HUMAIN has the best hardware, software, and expertise.

Some of the major partnerships and investments include:

Massive global partnerships (like a $5B AI infrastructure deal with AWS) underscore HUMAIN’s aim to make Saudi Arabia a world-class AI hub. Riyadh’s modern skyline represents the Kingdom’s rapid tech transformation.

From an investment perspective, HUMAIN is fueled by the deep pockets of the Public Investment Fund (PIF) and these strategic allies. Crown Prince MBS has often talked about investing today’s oil revenues into technologies of the future – and here we see that in action. The presence of U.S. tech giants also indicates a bridging of ecosystems: Silicon Valley meets Riyadh. For investors and business leaders, this means opportunities in Saudi Arabia’s tech sector are booming: cloud services, chip manufacturing, AI research, and more. It’s no coincidence that these deals were announced during a period when Saudi Arabia pledged hundreds of billions in commitments to U.S. companies, emphasizing win-win growth.

Beyond the tech specs and dollar signs, one must ask: What does HUMAIN mean for Saudi Arabia’s economy and society? The answer: potentially a huge transformation. This is where the business-focused and inspirational tones meet.

Finally, there’s the strategic global positioning. Saudi Arabia, through HUMAIN, is signaling that it wants to be a top-tier producer of AI, not just a consumer. In the geopolitical landscape, AI expertise is a strategic asset. If HUMAIN succeeds, Saudi Arabia could become “the third biggest AI provider in the world,” as some officials ambitiously suggest. This means attracting AI conferences, talent, and investments to the Kingdom – essentially making Riyadh a new Silicon Valley for AI in the Middle East. It’s a source of soft power too: leading in AI ethics discussions, contributing Arabic perspectives to global AI development, and building technology that can be exported to friendly nations.

There will, of course, be challenges ahead. Building world-class AI infrastructure and software is complex – it requires not just money but also cutting-edge research and constant innovation. There’s also the need to ensure responsible AI: HUMAIN must balance rapid innovation with ethical considerations, data privacy, and alignment with societal values (something they are mindful of, given the cultural emphasis of their products). Competition is global – other countries and companies are racing in AI too. But the commitment from the highest levels of Saudi leadership and the partnerships with the best in the industry give HUMAIN a strong chance to overcome these challenges.

It’s no surprise that HUMAIN has become the buzzword in tech circles here. The story has all the ingredients of a viral LinkedIn post: visionary leadership, multi-billion-dollar deals, cutting-edge tech, national pride, and a dash of global intrigue. For a long time, the Middle East hasn’t been seen as a creator of high-end tech – HUMAIN flips that narrative, and people are excited.

Think about it: In a single initiative, you have Saudi Arabia building mega-scale AI data centers powered by the latest U.S. chips, launching its own Arabic ChatGPT-like app that immediately serves millions, partnering with companies like Amazon to bring top-tier cloud services locally, and investing heavily in its youth to be AI leaders of tomorrow. It’s hard not to be impressed by the speed and scale of this effort. Only a year ago, HUMAIN didn’t exist; now it’s already rolling out products and pouring concrete for its data centers. That rapid progress creates a sense of “Something big is happening here, and it’s happening fast!”

From an inspirational standpoint, HUMAIN strikes a chord especially with young Saudis. It shows them that the world’s most advanced technologies – AI, supercomputing, generative models – are not only accessible to them but are being built by them. There’s a palpable sense of national pride in seeing our country take such a forefront position. Just as previous generations took pride in oil discoveries or megaprojects like NEOM, today’s generation is proud of achievements in AI and tech. Social media in Saudi Arabia has been abuzz with the HUMAIN Chat launch, with many sharing their first conversations with the Arabic AI and expressing amazement that it understands cultural references so well.

Moreover, HUMAIN is trendy and accessible in how it’s presented. The branding itself – “HUMAIN” (a clever blend of “human” and “AI”) – signals a focus on human-centric AI. The company’s communications stress themes like “enhancing human capabilities” and “AI with cultural depth.” This resonates widely, from policymakers to students, because it frames technology as a tool for empowerment rather than a cold, foreign gadget. On LinkedIn and in the media, HUMAIN is often discussed not in heavy technical jargon but in terms of vision and impact, which helps it go viral.

Lastly, there’s a global context: as nations around the world talk about AI strategy (USA, China, EU, etc.), Saudi Arabia has boldly entered that chat with HUMAIN. For international observers, it’s noteworthy and maybe unexpected, which adds to the intrigue. It’s not every day that you see a new company announce it will build one of the world’s top supercomputers and create a new top-tier language AI practically from scratch. That kind of moonshot ambition is what drives conversations and clicks. And yes, skepticism exists in some corners (“Can they pull it off?”), but even that skepticism keeps HUMAIN in the conversation and pushes its team to prove themselves.

HUMAIN is more than just a company; it’s a statement of intent by Saudi Arabia to lead in the defining technology of our time. In a matter of months, HUMAIN has galvanized the nation’s tech ecosystem, forged global partnerships, and delivered tangible products like the ALLAM-powered chatbot. It exemplifies an approach of think big, start fast, and it carries the weight of a country’s aspirations on its shoulders.

As an AI enthusiast observing this unfold, I find it deeply encouraging. HUMAIN shows that with vision, investment, and talent, no goal is too large – not even competing with the AI giants of the world. It is inspiring Saudi youth (and indeed the wider Arab youth) to dream in tech terms: to pursue careers in AI, to launch startups, to conduct research that pushes boundaries. It is also sending a message globally that Saudi Arabia is open for business in high-tech, ready to collaborate and contribute.

We are at the beginning of this journey. The data centers are being built as we speak, the models will keep improving, and more AI solutions will roll out. In the next year or two, we’ll likely see HUMAIN powering innovations in government services, smart city initiatives, and perhaps offering its AI services to other countries as well. The road ahead will require hard work – training AI models, integrating systems, scaling infrastructure – but the path is set.

For everyone who asked me, “What is HUMAIN?”, I hope this article gave you a clear picture. It’s an exciting time to be in the AI field, especially here in Saudi Arabia. HUMAIN stands at the intersection of technical innovation, economic strategy, and cultural pride. Whether you’re a tech professional, an investor, a student, or just a curious citizen, keep an eye on HUMAIN – it’s a story unfolding in real time, and it represents Saudi Arabia’s bold leap into the AI-driven future.

In the spirit of HUMAIN’s own call to action: let’s all engage with this AI revolution. Try out the Arabic AI chatbot, envision how AI can transform your industry, and most importantly, be part of the conversation. The era of HUMAIN – the human-AI synergy – has begun, and it’s making history in the Kingdom and beyond.

🔥 I’d love to hear your perspective — drop your thoughts in the comments 👇 and let’s shape the future of AI together!

Artificial Intelligence (AI) is no longer a futuristic concept—it is embedded in everyday decision-making, from medical diagnoses to financial transactions, hiring processes, and national security systems. Yet with this power comes risk: algorithmic bias, misinformation, privacy intrusions, and potential misuse in ways that could harm individuals and societies.

That is why AI regulation, governance, and ethics are among the most critical policy discussions of our time. Governments and international organizations are asking: How do we encourage innovation while protecting citizens?

Saudi Arabia, under its Vision 2030 framework, has taken proactive steps to position itself as both a regional leader and a global participant in this debate. The Kingdom has begun drafting AI ethics guidelines, hosting international summits, and building institutions like the Saudi Data and Artificial Intelligence Authority (SDAIA). While many of these efforts remain advisory rather than binding law, they represent an important trajectory toward shaping AI’s role responsibly.

This article examines Saudi Arabia’s initiatives in AI governance and ethics, before comparing them with approaches in the European Union, United States, China, and other global leaders.

Before diving into Saudi Arabia’s case, it’s important to outline the global backdrop. Several key frameworks guide how nations think about AI governance:

These principles are non-binding, but they heavily influence national strategies. Countries interpret and implement them differently, depending on their governance models, legal systems, and cultural values.

AI is central to Saudi Vision 2030, which seeks to diversify the economy and build a knowledge-driven society. The National Strategy for Data and AI (NSDAI) was launched in 2020, with the goal of positioning the Kingdom as a top-10 global AI leader by 2030.

To deliver on this ambition, the government established SDAIA, tasked with:

This centralized authority is unusual compared to more fragmented approaches elsewhere and gives Saudi Arabia an agile mechanism for steering AI development.

In 2023, SDAIA released draft AI Ethics Principles, a framework that outlines high-level values for AI development:

Crucially, Saudi Arabia’s framework adopts a risk-based model, categorizing AI systems as:

For example, AI that exploits vulnerable populations or poses serious risks to human rights would be banned outright. This mirrors the European Union AI Act, showing Saudi Arabia’s alignment with international best practices.
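A risk-based model of this kind can be pictured as a mapping from risk tier to obligation. The sketch below is illustrative only: the tier names, the obligations, and the `classify` rules are assumptions modeled loosely on the tiered pattern of the EU AI Act, not SDAIA's official wording. The only rule taken from the text above is that systems exploiting vulnerable populations or posing serious risks to human rights are banned.

```python
from enum import Enum


class RiskTier(Enum):
    # Illustrative tier names (assumed, not quoted from any regulation).
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical obligations attached to each tier.
OBLIGATIONS = {
    RiskTier.MINIMAL: "no additional obligations",
    RiskTier.LIMITED: "transparency notice to users",
    RiskTier.HIGH: "assessment and oversight before deployment",
    RiskTier.UNACCEPTABLE: "prohibited",
}


def classify(
    exploits_vulnerable_groups: bool,
    serious_rights_risk: bool,
    makes_consequential_decisions: bool,
    interacts_with_public: bool,
) -> RiskTier:
    """Return the strictest tier whose (assumed) condition applies."""
    if exploits_vulnerable_groups or serious_rights_risk:
        return RiskTier.UNACCEPTABLE
    if makes_consequential_decisions:
        return RiskTier.HIGH
    if interacts_with_public:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

Checking the strictest condition first means a system that matches several descriptions always lands in the most restrictive applicable tier.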

Recognizing the rise of large language models and deepfakes, Saudi Arabia issued two sets of Generative AI Guidelines in 2024—one for public-sector employees and another for general users.

They provide advice on:

While not legally binding, these guidelines represent practical governance tools for a rapidly evolving technology.

At present, Saudi Arabia has no dedicated AI law. The Ethics Principles and Generative AI Guidelines are advisory, not enforceable. Compliance is voluntary, though SDAIA has the authority to monitor and encourage adoption. Related laws, such as the Personal Data Protection Law, cover adjacent issues like data privacy.

This “guidance today, regulation tomorrow” approach gives Saudi Arabia flexibility while it studies global developments.

Saudi Arabia is also positioning itself as a global hub for AI governance discussions:

This dual domestic-international strategy allows Saudi Arabia to shape the AI governance narrative while showcasing its commitment to responsible AI.

The European Union is the first jurisdiction to adopt a comprehensive AI law—the EU AI Act. This legislation bans certain practices (like social scoring and exploitative surveillance), heavily regulates high-risk systems, and requires transparency for AI-generated content.

The EU model is precautionary and rights-driven, prioritizing citizen protection even if it slows innovation.

Comparison with Saudi Arabia:

The U.S. lacks a single AI law, instead relying on:

This patchwork allows flexibility but risks inconsistency.

Comparison with Saudi Arabia:

China regulates AI aggressively, especially generative AI. Its 2023 Interim Measures require content to align with socialist values, mandate security assessments, and enforce watermarking of deepfakes.

This ensures government control over AI’s societal impact, but critics see it as prioritizing censorship over innovation.

Comparison with Saudi Arabia:

Saudi Arabia sits between these models: more ambitious than the UAE or Japan in global engagement, but not yet as strict as the EU.

Saudi Arabia has moved quickly from aspiration to action in AI governance. By drafting ethical frameworks, publishing generative AI guidelines, and actively convening international summits, the Kingdom is ensuring it has a seat at the global AI table.

While its guidelines are not yet binding, the foundations are in place for future enforceable regulation that balances innovation with ethics. Importantly, Saudi Arabia’s efforts are not in isolation—they are aligned with OECD, UNESCO, and EU standards, while also introducing cultural and Islamic perspectives.

Globally, AI regulation remains fragmented. The EU leads with binding law, the U.S. prefers flexibility, China enforces strict content rules, and other nations experiment with hybrid approaches. Saudi Arabia’s distinctive contribution is its role as a convener and cultural interpreter, embedding local values into global conversations.

As AI continues to reshape industries and societies, Saudi Arabia’s dual strategy—building a robust domestic framework while driving international dialogue—positions it not just as a participant, but as a shaper of the future of responsible AI.

As Saudi Arabia transitions from ethical guidelines to potential binding AI regulations, how do you think its approach should balance innovation, cultural values, and global alignment—and what lessons can the world learn from this journey?
